Environment: virtual machines created with VMware

Operating system: Ubuntu 22.04.4

Remote connection tool: Xshell 8

Host environment preparation

1. Hosts

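| Hostname | IP | Components | Spec (for production: 1 TB system disk, 2 TB data disk) |
| --- | --- | --- | --- |
| master141 | 10.0.0.141 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2 CPU cores, 4 GB RAM, 60 GB disk |
| master142 | 10.0.0.142 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2 CPU cores, 4 GB RAM, 60 GB disk |
| master143 | 10.0.0.143 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2 CPU cores, 4 GB RAM, 60 GB disk |
| worker144 | 10.0.0.144 | kubelet, kube-proxy | 2 CPU cores, 4 GB RAM, 60 GB disk |
| worker145 | 10.0.0.145 | kubelet, kube-proxy | 2 CPU cores, 4 GB RAM, 60 GB disk |
| apiserver-lb140 | 10.0.0.140 | IP address of the apiserver load balancer | 2 CPU cores, 4 GB RAM, 60 GB disk |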

2. Install common packages on every node

apt update

apt -y install bind9-utils expect rsync jq psmisc net-tools lvm2 vim unzip rename

3. Run the following on master141

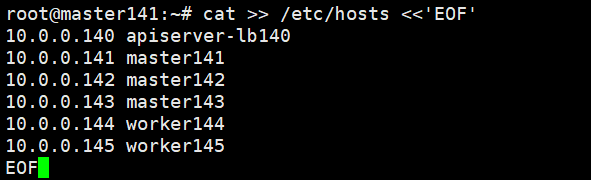

cat >> /etc/hosts <<'EOF'

10.0.0.140 apiserver-lb140

10.0.0.141 master141

10.0.0.142 master142

10.0.0.143 master143

10.0.0.144 worker144

10.0.0.145 worker145

EOF
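To confirm that /etc/hosts (here, or on each node after it has been synced) contains every cluster name, a small check can help. This is a minimal sketch; the function name missing_hosts is hypothetical and not part of any tool:

```shell
#!/bin/bash
# Print each expected hostname that does not appear as a name field on a
# non-comment line of a hosts-format file. No output means all are present.
missing_hosts() {
  local file=$1 name
  shift
  for name in "$@"; do
    # Fields 2..NF of a hosts line are the names mapped to the IP in field 1
    awk -v n="$name" '
      !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }
    ' "$file" || echo "$name"
  done
}
```

For example, `missing_hosts /etc/hosts apiserver-lb140 master141 master142 master143 worker144 worker145` prints nothing when all six names are present.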

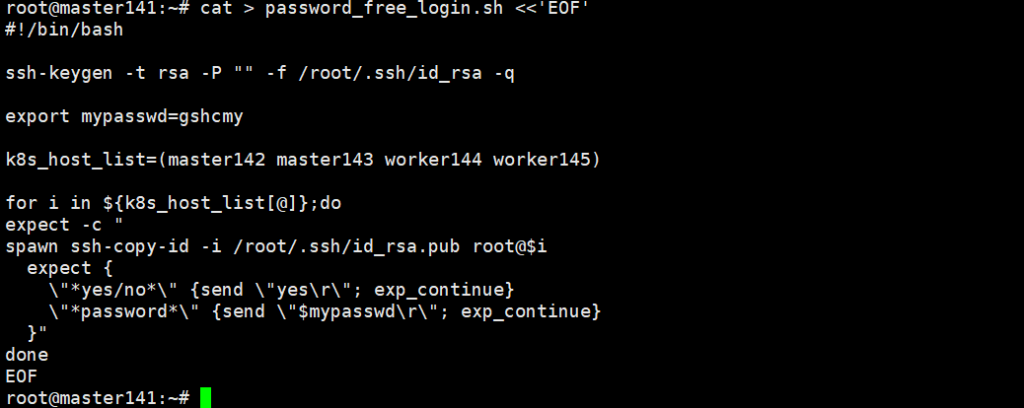

cat > password_free_login.sh <<'EOF'

#!/bin/bash

ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa -q

export mypasswd=gshcmy

k8s_host_list=(master142 master143 worker144 worker145)

for i in ${k8s_host_list[@]};do

expect -c "

spawn ssh-copy-id -i /root/.ssh/id_rsa.pub root@$i

expect {

\"*yes/no*\" {send \"yes\r\"; exp_continue}

\"*password*\" {send \"$mypasswd\r\"; exp_continue}

}"

done

EOF

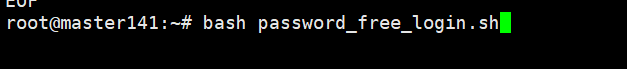

bash password_free_login.sh
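Once the keys are copied, ssh with BatchMode=yes fails instead of prompting for a password, which makes it a quick way to see whether key-based login really works on each host. A sketch under that assumption; verify_ssh and the SSH_CMD override (only there so the function can be tried without real hosts) are hypothetical names:

```shell
#!/bin/bash
# Check key-based SSH login for each host. BatchMode=yes makes ssh fail
# instead of asking for a password. Returns non-zero if any host fails.
verify_ssh() {
  local ssh_cmd=${SSH_CMD:-ssh} failed=0 host
  for host in "$@"; do
    # Run a no-op remote command; success means the key was accepted
    if $ssh_cmd -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true; then
      echo "${host}: OK"
    else
      echo "${host}: FAILED"
      failed=1
    fi
  done
  return "$failed"
}
```

`verify_ssh master142 master143 worker144 worker145` should print OK for every host; a host reported as FAILED will keep asking for a password in later steps such as data_rsync.sh.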

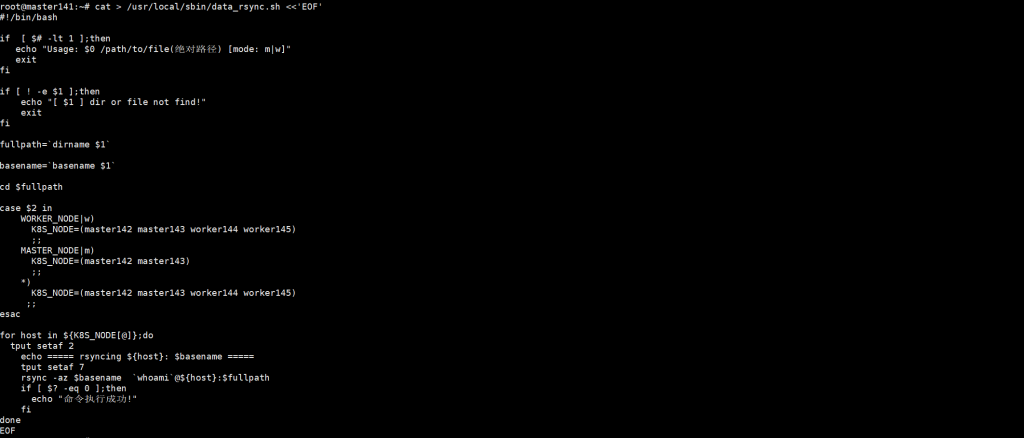

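cat > /usr/local/sbin/data_rsync.sh <<'EOF'
#!/bin/bash
# Sync a file or directory from this node to the same path on other nodes.
# Usage: data_rsync.sh /absolute/path [m|w]
#   m / MASTER_NODE : the other master nodes only
#   w / WORKER_NODE : the other masters and all workers (also the default)
if [ $# -lt 1 ];then
    echo "Usage: $0 /path/to/file (absolute path) [mode: m|w]"
    exit 1
fi
if [ ! -e "$1" ];then
    echo "[ $1 ] dir or file not found!"
    exit 1
fi
fullpath=$(dirname "$1")
basename=$(basename "$1")
cd "$fullpath" || exit 1
case $2 in
    WORKER_NODE|w)
        K8S_NODE=(master142 master143 worker144 worker145)
        ;;
    MASTER_NODE|m)
        K8S_NODE=(master142 master143)
        ;;
    *)
        K8S_NODE=(master142 master143 worker144 worker145)
        ;;
esac
for host in "${K8S_NODE[@]}";do
    tput setaf 2
    echo "===== rsyncing ${host}: $basename ====="
    tput setaf 7
    # Prints "命令执行成功!" ("command succeeded") when rsync exits with 0
    if rsync -az "$basename" "$(whoami)@${host}:$fullpath"; then
        echo "命令执行成功!"
    fi
done
EOF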

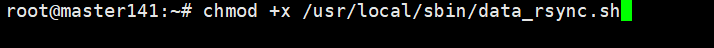

chmod +x /usr/local/sbin/data_rsync.sh

data_rsync.sh /etc/hosts  # if prompted, enter the root password of the target node

4. Tune the environment on all nodes

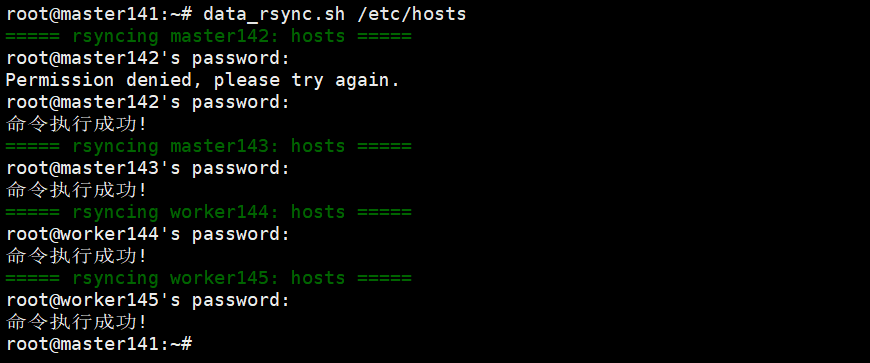

root@master141:~# systemctl disable --now NetworkManager ufw

root@master142:~# systemctl disable --now NetworkManager ufw

root@master143:~# systemctl disable --now NetworkManager ufw

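root@worker144:~# systemctl disable --now NetworkManager ufw

root@worker145:~# systemctl disable --now NetworkManager ufw

On Ubuntu Server, NetworkManager is usually not installed, so systemctl may report that the unit does not exist; that message can be ignored.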

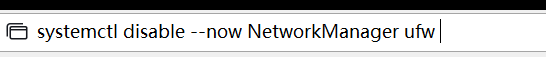

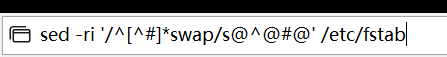

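The screenshot below shows the Compose Bar in Xshell 8 (View → Compose → Compose Bar). Click its leftmost icon to choose where input is sent; here "All Xshell sessions" is selected, so a command typed once is sent to every session instead of being entered on each host separately.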

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
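The sed expression above prefixes # to every non-comment line that mentions swap. A sketch that applies the same expression to a file of your choice (handy for trying it on a copy of /etc/fstab first) and then confirms that no active swap entry remains; disable_swap_entries is a hypothetical name:

```shell
#!/bin/bash
# Comment out active swap entries in an fstab-style file, then verify.
disable_swap_entries() {
  local fstab=$1
  # Same expression as above: prefix '#' to any uncommented line mentioning swap
  sed -ri '/^[^#]*swap/s@^@#@' "$fstab"
  # An uncommented line that still mentions swap means the edit did not apply
  if grep -Eq '^[^#]*swap' "$fstab"; then
    echo "active swap entries remain in $fstab"
    return 1
  fi
  echo "no active swap entries in $fstab"
}
```

On a live node, `swapon --show` printing nothing confirms that swap is currently off.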

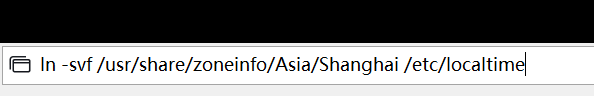

ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

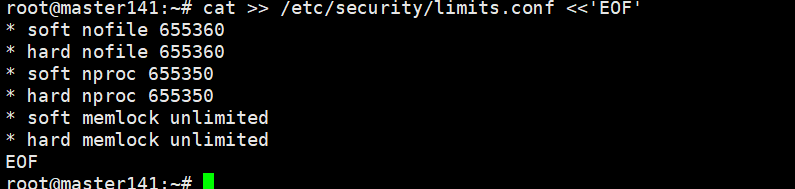

cat >> /etc/security/limits.conf <<'EOF'

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

Run this on every node.
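A soft limit cannot be raised above the hard limit for the same item, so a soft value larger than its hard value does not take effect as written. A minimal awk sketch (check_limits is a hypothetical name) that flags such pairs in a limits.conf-style file; it only compares lines whose type is exactly soft or hard and treats unlimited as the largest value:

```shell
#!/bin/bash
# Report "domain item" pairs whose soft value exceeds the hard value.
# Returns 1 if any such pair is found, 0 otherwise.
check_limits() {
  # Fields: domain type item value; comments and short lines are skipped
  awk '
    /^[[:space:]]*#/ || NF < 4 { next }
    { key = $1 " " $3; val[$2, key] = $4; keys[key] = 1 }
    END {
      bad = 0
      for (k in keys) {
        s = val["soft", k]; h = val["hard", k]
        if (s == "" || h == "" || h == "unlimited") continue
        if (s == "unlimited" || s + 0 > h + 0) {
          print "soft > hard: " k " (" s " > " h ")"
          bad = 1
        }
      }
      exit bad
    }
  ' "$1"
}
```

`check_limits /etc/security/limits.conf` prints nothing and returns 0 when every soft value is within its hard value.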

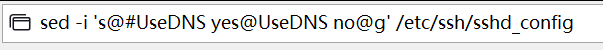

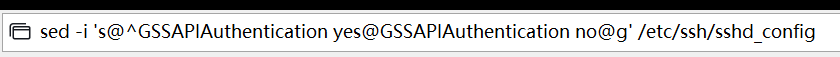

sed -i 's@#UseDNS yes@UseDNS no@g' /etc/ssh/sshd_config

sed -i 's@^GSSAPIAuthentication yes@GSSAPIAuthentication no@g' /etc/ssh/sshd_config

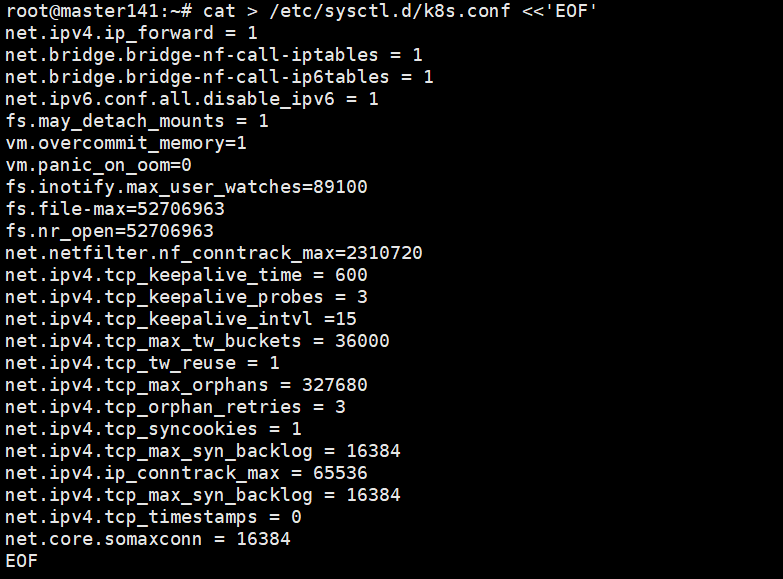

cat > /etc/sysctl.d/k8s.conf <<'EOF'

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv6.conf.all.disable_ipv6 = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536


net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

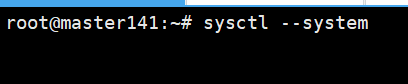

On all nodes:

sysctl --system
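sysctl --system reports keys that the running kernel does not have, and a repeated key simply ends up with its last value. A sketch (check_sysctl_conf is a hypothetical name) that lists both kinds of problem for a sysctl.d file by mapping each key to its path under /proc/sys, with dots turned into slashes:

```shell
#!/bin/bash
# Print "duplicate: KEY" for repeated keys and "missing: KEY" for keys the
# running kernel does not expose under /proc/sys.
check_sysctl_conf() {
  local conf=$1 key path
  # Duplicates: drop comments/blank lines, keep the part before '=', strip spaces
  grep -Ev '^[[:space:]]*(#|;|$)' "$conf" | cut -d= -f1 | tr -d ' \t' \
    | sort | uniq -d | sed 's/^/duplicate: /'
  # Missing: a.b.c maps to /proc/sys/a/b/c
  while IFS='=' read -r key _; do
    key=$(echo "$key" | tr -d ' \t')
    case $key in ''|'#'*|';'*) continue ;; esac
    path=/proc/sys/${key//.//}
    [ -e "$path" ] || echo "missing: $key"
  done < "$conf"
}
```

For example, `check_sysctl_conf /etc/sysctl.d/k8s.conf`. Keys whose individual components contain dots themselves (such as VLAN interface names) are not handled by this simple mapping.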

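Note: sysctl --system may report "cannot stat" errors for keys the running kernel does not provide, such as fs.may_detach_mounts (a setting of RHEL/CentOS kernels) and the legacy net.ipv4.ip_conntrack_max; such lines can be removed. The net.bridge.* keys only exist after the br_netfilter module is loaded, which the containerd section below configures.

On all nodes: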

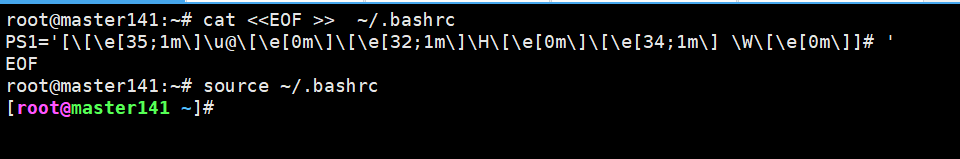

cat <<EOF >> ~/.bashrc

PS1='[\[\e[35;1m\]\u@\[\e[0m\]\[\e[32;1m\]\H\[\e[0m\]\[\e[34;1m\] \W\[\e[0m\]]# '

EOF

This sets the prompt colors to purple (user), green (host) and blue (working directory). Reload the file:

source ~/.bashrc

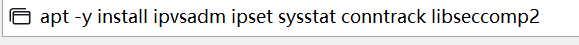

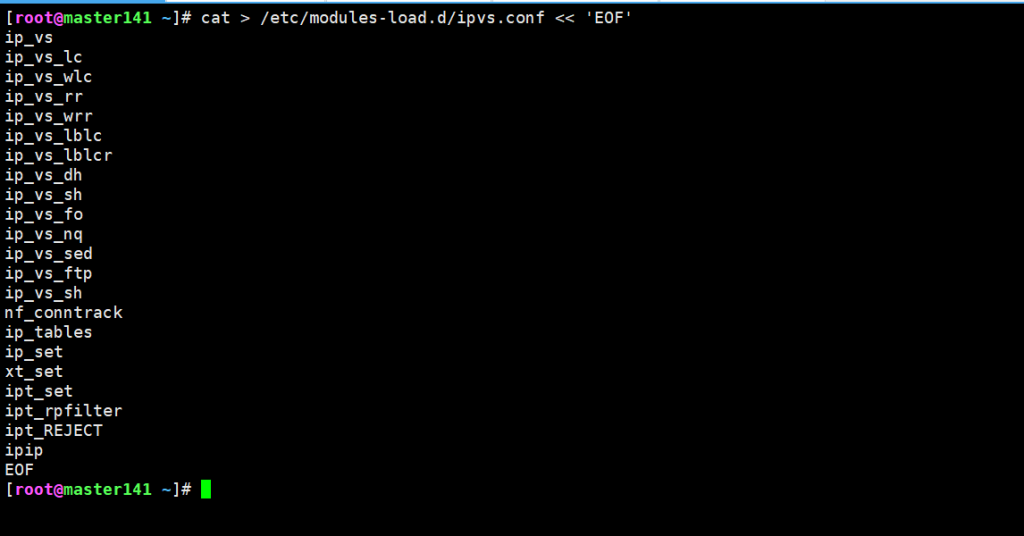

5. Install ipvsadm on all nodes so kube-proxy can load-balance with IPVS

apt -y install ipvsadm ipset sysstat conntrack libseccomp2

cat > /etc/modules-load.d/ipvs.conf << 'EOF'

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp


nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

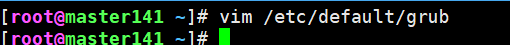

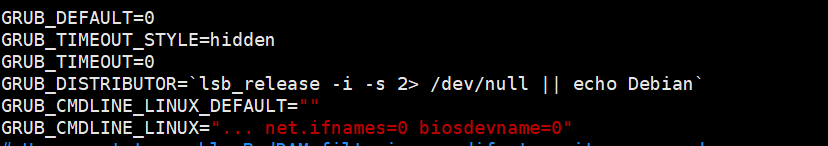

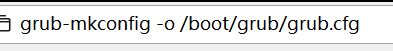

vim /etc/default/grub

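On all nodes: the screenshots show the command to run and the change to make, which is only on the last line of the second screenshot. Add net.ifnames=0 biosdevname=0 to the kernel command line (the GRUB_CMDLINE_LINUX setting) so the network interface keeps the classic name eth0, which the netplan file below uses: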

... net.ifnames=0 biosdevname=0

grub-mkconfig -o /boot/grub/grub.cfg
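The same edit can be scripted instead of done in vim. A sketch, assuming the parameters go into an existing GRUB_CMDLINE_LINUX="..." line; add_grub_params is a hypothetical name, and parameters containing / or & would need escaping before being placed in the sed expressions:

```shell
#!/bin/bash
# Append kernel parameters to GRUB_CMDLINE_LINUX, skipping ones already present.
add_grub_params() {
  local file=$1 param current
  shift
  for param in "$@"; do
    # Current value between the quotes (GRUB_CMDLINE_LINUX_DEFAULT is not matched)
    current=$(sed -n 's/^GRUB_CMDLINE_LINUX="\(.*\)"$/\1/p' "$file")
    case " $current " in
      *" $param "*) continue ;;   # already there, keep the line unchanged
    esac
    if [ -z "$current" ]; then
      sed -i "s/^GRUB_CMDLINE_LINUX=\"\"$/GRUB_CMDLINE_LINUX=\"$param\"/" "$file"
    else
      sed -i "s/^\(GRUB_CMDLINE_LINUX=\".*\)\"$/\1 $param\"/" "$file"
    fi
  done
}
```

Run `add_grub_params /etc/default/grub net.ifnames=0 biosdevname=0`, then regenerate the config as above; on Ubuntu, update-grub is a wrapper around the same grub-mkconfig -o /boot/grub/grub.cfg call. Running the function twice does not duplicate the parameters.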

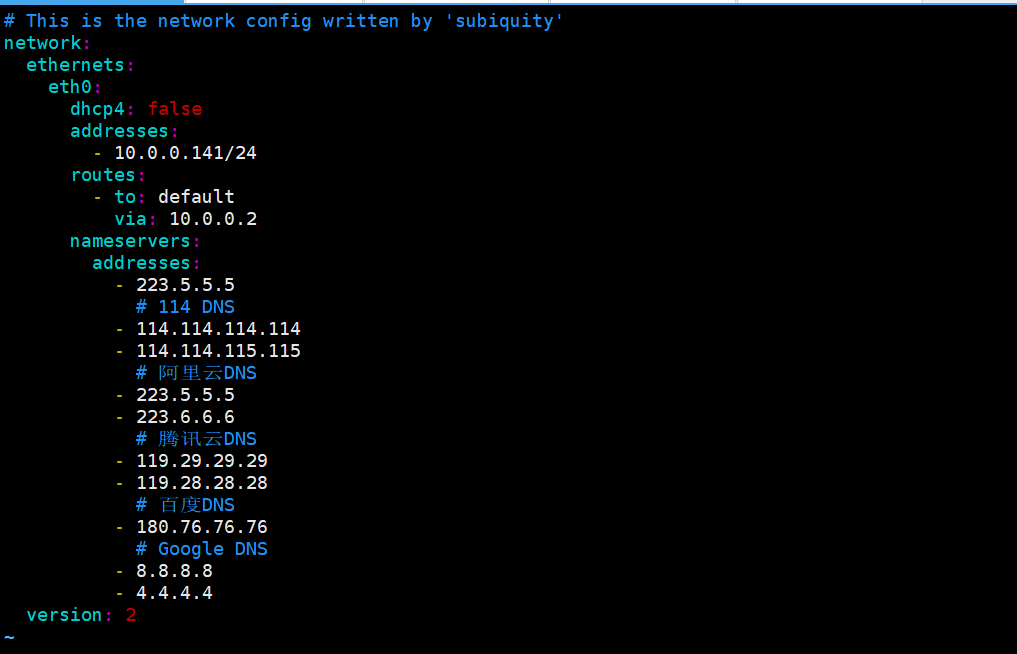

vim /etc/netplan/00-installer-config.yaml  # needed on 141-145; use each node's own IP under addresses

network:
  ethernets:
    eth0:
      dhcp4: false
      addresses:
        - 10.0.0.141/24
      routes:
        - to: default
          via: 10.0.0.2
      nameservers:
        addresses:
          # Alibaba Cloud DNS
          - 223.5.5.5
          - 223.6.6.6
          # 114 DNS
          - 114.114.114.114
          - 114.114.115.115
          # Tencent Cloud DNS
          - 119.29.29.29
          - 119.28.28.28
          # Baidu DNS
          - 180.76.76.76
          # Google DNS
          - 8.8.8.8
          - 8.8.4.4
  version: 2
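Because this file differs between master141 and worker145 only in the address, it can be generated per node. A sketch (gen_netplan is a hypothetical name) that keeps the /24 prefix and the 10.0.0.2 gateway from the example and, for brevity, lists only two of the resolvers:

```shell
#!/bin/bash
# Print the netplan configuration for one node, given its IPv4 address.
gen_netplan() {
  local ip=$1
  cat <<EOF
network:
  ethernets:
    eth0:
      dhcp4: false
      addresses:
        - ${ip}/24
      routes:
        - to: default
          via: 10.0.0.2
      nameservers:
        addresses:
          - 223.5.5.5
          - 114.114.114.114
  version: 2
EOF
}
```

For example, on master142: `gen_netplan 10.0.0.142 > /etc/netplan/00-installer-config.yaml`, then `netplan generate` to check the file for errors before rebooting.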

reboot  # reboot so the interface rename and the new network settings take effect

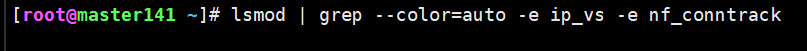

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

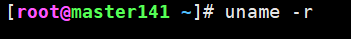

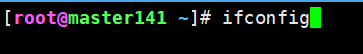

uname -r

ifconfig
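lsmod only lists modules that are currently loaded, so comparing its output with /etc/modules-load.d/ipvs.conf shows which entries did not load after the reboot. A sketch (missing_modules is a hypothetical name) that reads lsmod output on stdin and prints the names it cannot find; modules built into the kernel, or loaded under another name through an alias, also show up here and need a manual look:

```shell
#!/bin/bash
# Print module names listed in a modules-load.d file that are absent from
# the lsmod output supplied on stdin.
missing_modules() {
  local conf=$1 mod loaded
  # First column of lsmod output, skipping the "Module Size Used by" header
  loaded=$(awk 'NR > 1 { print $1 }')
  while read -r mod; do
    case $mod in ''|'#'*) continue ;; esac
    grep -qxF "$mod" <<< "$loaded" || echo "$mod"
  done < "$conf"
}
```

Usage: `lsmod | missing_modules /etc/modules-load.d/ipvs.conf`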

Install containerd (all nodes)

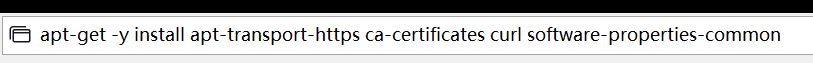

1. Install system tools

apt-get -y install apt-transport-https ca-certificates curl software-properties-common

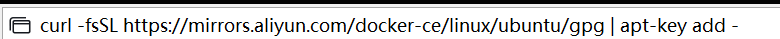

2. Add the repository GPG key (on Ubuntu 22.04, apt-key still works but prints a deprecation warning)

curl -fsSL https://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | apt-key add -

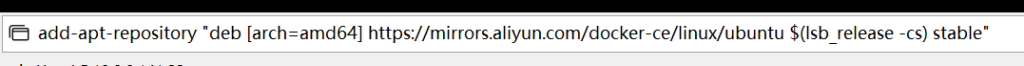

3. Add the repository

add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"
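Steps 2 and 3 rely on apt-key, which is deprecated. The replacement is to keep the key in its own keyring file and reference it with signed-by in the source line. A sketch of that style; the keyring path and the function name docker_repo_line are assumptions, and the commented commands show the intended order on a real node. Use either this or the apt-key setup, not both: two entries for the same repository with different Signed-By values can make apt report a conflict.

```shell
#!/bin/bash
# Assumed locations; any root-owned keyring path works if the source line matches.
KEYRING=/etc/apt/keyrings/docker-ce.gpg
MIRROR=https://mirrors.aliyun.com/docker-ce/linux/ubuntu

# Print the apt source line for an Ubuntu codename (e.g. jammy) and architecture.
docker_repo_line() {
  local codename=$1 arch=${2:-amd64}
  echo "deb [arch=${arch} signed-by=${KEYRING}] ${MIRROR} ${codename} stable"
}

# On a real node, as root:
#   install -m 0755 -d /etc/apt/keyrings
#   curl -fsSL "${MIRROR}/gpg" | gpg --dearmor -o "$KEYRING"
#   docker_repo_line "$(lsb_release -cs)" > /etc/apt/sources.list.d/docker-ce.list
#   apt-get update
```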

4. Refresh the package index

apt-get update

5. Install containerd

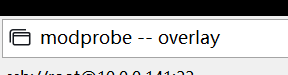

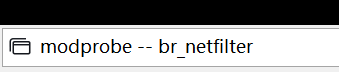

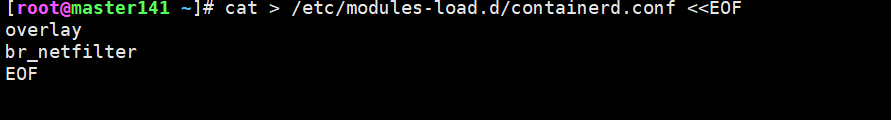

apt-get -y install containerd.io

6. Load the kernel modules containerd needs

modprobe -- overlay

modprobe -- br_netfilter

cat > /etc/modules-load.d/containerd.conf <<EOF

overlay

br_netfilter

EOF

On all nodes

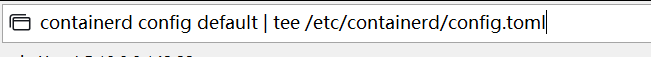

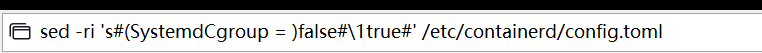

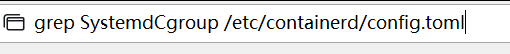

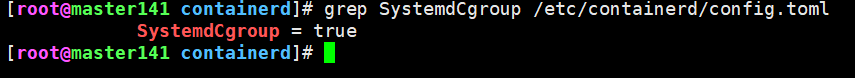

7. Edit the containerd configuration file

containerd config default | tee /etc/containerd/config.toml

sed -ri 's#(SystemdCgroup = )false#\1true#' /etc/containerd/config.toml

grep SystemdCgroup /etc/containerd/config.toml

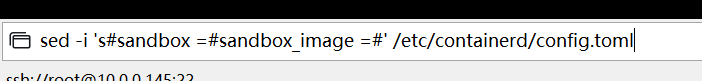

Change the pause (sandbox) image

sed -i 's#registry.k8s.io/pause:3.6#registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7#' /etc/containerd/config.toml

Or, if your default config references pause:3.10.1 instead:

sed -i 's#registry.k8s.io/pause:3.10.1#registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7#' /etc/containerd/config.toml

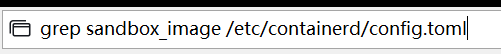

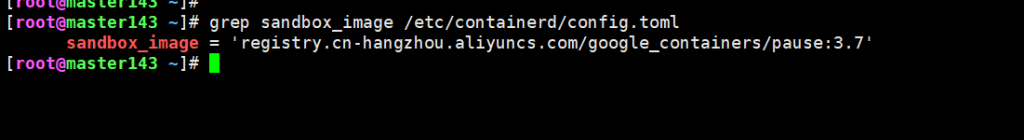

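sed -i 's#sandbox =#sandbox_image =#' /etc/containerd/config.toml

On containerd 1.x the key is already named sandbox_image, so this command changes nothing. On containerd 2.x the pause image is stored under pinned_images as sandbox = ..., and renaming that key may make containerd ignore it and fall back to its built-in default; after containerd starts, check the effective value with containerd config dump | grep -i sandbox.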

grep sandbox_image /etc/containerd/config.toml
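Which pause tag the generated default config contains depends on the containerd release (the two commands above target 3.6 and 3.10.1), and a sed written for the wrong tag silently changes nothing. A sketch that rewrites any registry.k8s.io/pause:<tag> reference and leaves the key name untouched; set_pause_image is a hypothetical name:

```shell
#!/bin/bash
# Replace any registry.k8s.io/pause:<tag> image reference in a config file.
set_pause_image() {
  local file=$1 image=$2
  # '#' as the sed delimiter so the slashes in image names need no escaping
  sed -ri "s#registry\.k8s\.io/pause:[0-9.]+#${image}#" "$file"
}
```

For example, `set_pause_image /etc/containerd/config.toml registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7`, followed by `grep pause /etc/containerd/config.toml` to confirm.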

Start containerd on all nodes

systemctl daemon-reload

systemctl enable --now containerd

systemctl status containerd

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

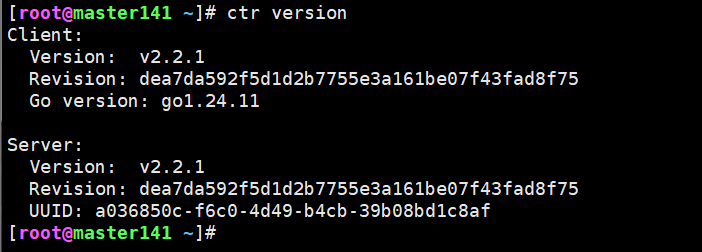

Check the containerd version

ctr version

Managing containerd namespaces, images, containers and tasks

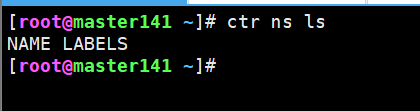

List the current namespaces

ctr ns ls

Namespaces

Create a namespace

ctr ns c gshcmy-k8s

ctr ns ls

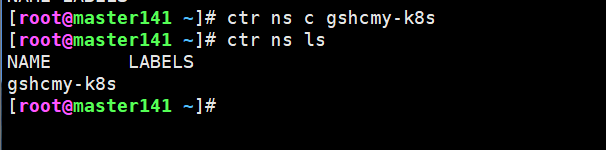

Delete a namespace (the namespace must be empty, otherwise it cannot be deleted)

ctr ns rm gshcmy-k8s

ctr ns ls

Image management

Pull images into a namespace (the images below are the author's practice images and may not be available to you)

ctr ns c gshcmy-k8s

ctr ns ls

ctr image pull registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v1

ctr i ls

ctr -n default i ls

ctr -n gshcmy-k8s i ls

ctr -n gshcmy-k8s image pull registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v2

ctr -n gshcmy-k8s i ls

Delete an image

ctr -n default i ls

ctr i rm registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v1

Container management

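Run a container (without -d, ctr run stays attached in the foreground, so run the following commands from a second terminal)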

ctr -n gshcmy-k8s run registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v2 haha

List containers

[root@master141 ~]# ctr -n gshcmy-k8s c ls

CONTAINER IMAGE RUNTIME

haha registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v2 io.containerd.runc.v2

List running tasks

[root@master141 ~]# ctr -n gshcmy-k8s t ls

TASK PID STATUS

haha 19041 RUNNING

[root@master141 ~]#

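Exec into the running container (--exec-id takes an arbitrary ID that must be unique among the task's processes)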

[root@master141 ~]# ctr -n gshcmy-k8s t exec -t --exec-id 2024 haha sh

/ # ifconfig

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ #

Kill the running task

[root@master141 ~]# ctr -n gshcmy-k8s t kill haha

[root@master141 ~]# ctr -n gshcmy-k8s t ls

TASK PID STATUS

Delete the container

[root@master141 ~]# ctr -n gshcmy-k8s c ls

CONTAINER IMAGE RUNTIME

haha registry.cn-hangzhou.aliyuncs.com/gshcmy-k8s/apps:v2 io.containerd.runc.v2

[root@master141 ~]# ctr -n gshcmy-k8s c rm haha

[root@master141 ~]# ctr -n gshcmy-k8s c ls

CONTAINER IMAGE RUNTIME

Install etcd (downloading from GitHub may require a proxy)

wget https://github.com/etcd-io/etcd/releases/download/v3.5.14/etcd-v3.5.14-linux-amd64.tar.gz

Extract the etcd binaries

tar -xf etcd-v3.5.14-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.14-linux-amd64/etcd{,ctl}

ll /usr/local/bin/

[root@master141 ~]# etcdctl version  # check the version

etcdctl version: 3.5.14

API version: 3.5

Distribute the binaries to the other master nodes

[root@master141 ~]# data_rsync.sh /usr/local/bin/etcd m

===== rsyncing master142: etcd =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcd =====

root@master143's password:

命令执行成功!

[root@master141 ~]# data_rsync.sh /usr/local/bin/etcdctl m

===== rsyncing master142: etcdctl =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcdctl =====

root@master143's password:

命令执行成功!

[root@master141 ~]#

Install the Kubernetes binaries

wget https://dl.k8s.io/v1.30.2/kubernetes-server-linux-amd64.tar.gz

Extract the Kubernetes binaries

tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

Check the version

[root@master141 ~]# kubelet --version

Kubernetes v1.30.2

[root@master141 ~]#

Distribute the binaries (m: the other masters only; w: the other masters and all workers)

[root@master141 ~]# data_rsync.sh /usr/local/bin/kube-apiserver m

[root@master141 ~]# data_rsync.sh /usr/local/bin/kube-scheduler m

[root@master141 ~]# data_rsync.sh /usr/local/bin/kube-controller-manager m

[root@master141 ~]# data_rsync.sh /usr/local/bin/kubectl m

[root@master141 ~]# data_rsync.sh /usr/local/bin/kubelet w

[root@master141 ~]# data_rsync.sh /usr/local/bin/kube-proxy w

Generate the etcd certificate files

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.5/cfssl-certinfo_1.6.5_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.5/cfssljson_1.6.5_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.5/cfssl_1.6.5_linux_amd64

After downloading, take a VM snapshot, then strip the version suffix from the cfssl file names

[root@master141 ~]# ll cfssl*

-rw-r--r-- 1 root root 11890840 Jun 15 2024 cfssl_1.6.5_linux_amd64

-rw-r--r-- 1 root root 8413336 Jun 15 2024 cfssl-certinfo_1.6.5_linux_amd64

-rw-r--r-- 1 root root 6205592 Jun 15 2024 cfssljson_1.6.5_linux_amd64

[root@master141 ~]# rename -v "s/_1.6.5_linux_amd64//g" cfssl*

cfssl_1.6.5_linux_amd64 renamed as cfssl

cfssl-certinfo_1.6.5_linux_amd64 renamed as cfssl-certinfo

cfssljson_1.6.5_linux_amd64 renamed as cfssljson

[root@master141 ~]# ll cfssl*

-rw-r--r-- 1 root root 11890840 Jun 15 2024 cfssl

-rw-r--r-- 1 root root 8413336 Jun 15 2024 cfssl-certinfo

-rw-r--r-- 1 root root 6205592 Jun 15 2024 cfssljson

Move the cfssl binaries into a directory on PATH and make them executable

[root@master141 ~]# mv cfssl* /usr/local/bin/

[root@master141 ~]# chmod +x /usr/local/bin/cfssl*

[root@master141 ~]# ll /usr/local/bin/cfssl*

-rwxr-xr-x 1 root root 11890840 Jun 15 2024 /usr/local/bin/cfssl*

-rwxr-xr-x 1 root root 8413336 Jun 15 2024 /usr/local/bin/cfssl-certinfo*

-rwxr-xr-x 1 root root 6205592 Jun 15 2024 /usr/local/bin/cfssljson*

On master141, create the etcd certificate directories

[root@master141 ~]# mkdir -pv /gshcmy/certs/{etcd,pki}/ && cd /gshcmy/certs/pki/

[root@master141 pki]# cat > etcd-ca-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

Generate the etcd CA certificate and its key

[root@master141 pki]# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /gshcmy/certs/etcd/etcd-ca

2025/12/23 16:58:16 [INFO] generating a new CA key and certificate from CSR

2025/12/23 16:58:16 [INFO] generate received request

2025/12/23 16:58:16 [INFO] received CSR

2025/12/23 16:58:16 [INFO] generating key: rsa-2048

2025/12/23 16:58:16 [INFO] encoded CSR

2025/12/23 16:58:16 [INFO] signed certificate with serial number 670358402125359505823061965820939276734710307322

[root@master141 pki]# pwd

/gshcmy/certs/pki

[root@master141 pki]# ll /gshcmy/certs/etcd/

total 20

drwxr-xr-x 2 root root 4096 Dec 23 16:58 ./

drwxr-xr-x 4 root root 4096 Dec 23 16:57 ../

-rw-r--r-- 1 root root 1050 Dec 23 16:58 etcd-ca.csr

-rw------- 1 root root 1675 Dec 23 16:58 etcd-ca-key.pem

-rw-r--r-- 1 root root 1318 Dec 23 16:58 etcd-ca.pem

[root@master141 pki]#

On master141, issue the etcd certificate from the self-built CA

[root@master141 pki]# cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

[root@master141 pki]# cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

]

}

EOF

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/etcd/etcd-ca.pem \

-ca-key=/gshcmy/certs/etcd/etcd-ca-key.pem \

-config=ca-config.json \

--hostname=127.0.0.1,master141,master142,master143,10.0.0.141,10.0.0.142,10.0.0.143 \

--profile=kubernetes \

etcd-csr.json | cfssljson -bare /gshcmy/certs/etcd/etcd-server

[root@master141 pki]# ll /gshcmy/certs/etcd/etcd-server*

-rw-r--r-- 1 root root 1119 Dec 23 17:07 /gshcmy/certs/etcd/etcd-server.csr

-rw------- 1 root root 1675 Dec 23 17:07 /gshcmy/certs/etcd/etcd-server-key.pem

-rw-r--r-- 1 root root 1452 Dec 23 17:07 /gshcmy/certs/etcd/etcd-server.pem

[root@master141 pki]#
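Before distributing the certificate it is worth checking that every etcd member's hostname and IP made it into the Subject Alternative Names. A sketch that relies only on openssl x509 -text; cert_sans is a hypothetical name:

```shell
#!/bin/bash
# Print the Subject Alternative Names of a PEM certificate, one per line.
cert_sans() {
  openssl x509 -in "$1" -noout -text \
    | grep -A1 'Subject Alternative Name' \
    | tail -n1 \
    | tr ',' '\n' \
    | sed 's/^[[:space:]]*//'
}
```

`cert_sans /gshcmy/certs/etcd/etcd-server.pem` should list 127.0.0.1, master141 to master143 and 10.0.0.141 to 10.0.0.143, as DNS: and IP Address: entries.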

On master141, copy the etcd certificates to the other two master nodes

[root@master141 pki]# MasterNodes='master142 master143'

[root@master141 pki]# for NODE in $MasterNodes; do ssh $NODE "mkdir -pv /gshcmy/certs/etcd/"; done

root@master142's password:

mkdir: created directory '/gshcmy'

mkdir: created directory '/gshcmy/certs'

mkdir: created directory '/gshcmy/certs/etcd/'

root@master143's password:

mkdir: created directory '/gshcmy'

mkdir: created directory '/gshcmy/certs'

mkdir: created directory '/gshcmy/certs/etcd/'

[root@master141 pki]# data_rsync.sh /gshcmy/certs/etcd/etcd-ca-key.pem m

===== rsyncing master142: etcd-ca-key.pem =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcd-ca-key.pem =====

root@master143's password:

命令执行成功!

[root@master141 pki]# data_rsync.sh /gshcmy/certs/etcd/etcd-ca.pem m

===== rsyncing master142: etcd-ca.pem =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcd-ca.pem =====

root@master143's password:

命令执行成功!

[root@master141 pki]# data_rsync.sh /gshcmy/certs/etcd/etcd-server-key.pem m

===== rsyncing master142: etcd-server-key.pem =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcd-server-key.pem =====

root@master143's password:

命令执行成功!

[root@master141 pki]# data_rsync.sh /gshcmy/certs/etcd/etcd-server.pem m

===== rsyncing master142: etcd-server.pem =====

root@master142's password:

命令执行成功!

===== rsyncing master143: etcd-server.pem =====

root@master143's password:

命令执行成功!

Start the etcd cluster

Create the etcd configuration file on each node
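The three files below differ only in the member name and the node's own IP, so instead of typing them three times, the master142 and master143 files can be derived from master141's. A sketch under that assumption; derive_etcd_config is a hypothetical name, and the initial-cluster line is deliberately left alone because it must list every member identically on all nodes:

```shell
#!/bin/bash
# Print a copy of master141's etcd.config.yml adapted to another member.
derive_etcd_config() {
  local src=$1 name=$2 ip=$3
  # Rename the member, then swap 10.0.0.141 for the new IP on every line
  # except initial-cluster (single quotes avoid interactive history expansion of "!")
  sed -e "s/^name: 'master141'/name: '${name}'/" \
      -e '/^initial-cluster:/!s/10\.0\.0\.141/'"${ip}"'/g' "$src"
}
```

For example: `derive_etcd_config /gshcmy/softwares/etcd/etcd.config.yml master142 10.0.0.142 | ssh master142 'cat > /gshcmy/softwares/etcd/etcd.config.yml'` (after the directory exists on master142).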

[root@master141 ~]# mkdir -pv /gshcmy/softwares/etcd

[root@master141 ~]# cat > /gshcmy/softwares/etcd/etcd.config.yml <<'EOF'
name: 'master141'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.141:2380'
listen-client-urls: 'https://10.0.0.141:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.141:2380'
advertise-client-urls: 'https://10.0.0.141:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master141=https://10.0.0.141:2380,master142=https://10.0.0.142:2380,master143=https://10.0.0.143:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

[root@master142 ~]# mkdir -pv /gshcmy/softwares/etcd

[root@master142 ~]# cat > /gshcmy/softwares/etcd/etcd.config.yml << 'EOF'

name: 'master142'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.142:2380'
listen-client-urls: 'https://10.0.0.142:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.142:2380'
advertise-client-urls: 'https://10.0.0.142:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master141=https://10.0.0.141:2380,master142=https://10.0.0.142:2380,master143=https://10.0.0.143:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

[root@master143 ~]# mkdir -pv /gshcmy/softwares/etcd

[root@master143 ~]# cat > /gshcmy/softwares/etcd/etcd.config.yml << 'EOF'

name: 'master143'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.143:2380'
listen-client-urls: 'https://10.0.0.143:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.143:2380'
advertise-client-urls: 'https://10.0.0.143:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master141=https://10.0.0.141:2380,master142=https://10.0.0.142:2380,master143=https://10.0.0.143:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/gshcmy/certs/etcd/etcd-server.pem'
  key-file: '/gshcmy/certs/etcd/etcd-server-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/gshcmy/certs/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

On master141-143, create the etcd systemd unit (all three nodes need it)

cat > /usr/lib/systemd/system/etcd.service <<'EOF'

[Unit]

Description=Gsh cmy's Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/gshcmy/softwares/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

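EOF

Start the etcd cluster (run this on all three masters at about the same time: a member of a new cluster cannot report ready until enough peers are up, so starting it on one node alone may appear to hang)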

systemctl daemon-reload && systemctl enable --now etcd

systemctl status etcd

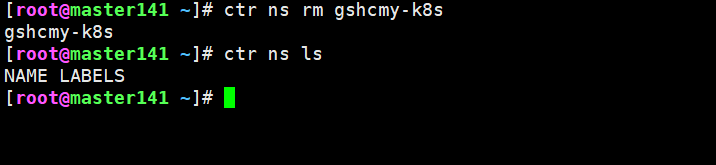

Check the cluster status

etcdctl --endpoints="10.0.0.141:2379,10.0.0.142:2379,10.0.0.143:2379" --cacert=/gshcmy/certs/etcd/etcd-ca.pem --cert=/gshcmy/certs/etcd/etcd-server.pem --key=/gshcmy/certs/etcd/etcd-server-key.pem endpoint status --write-out=table

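To verify high availability, stop etcd on one node (systemctl stop etcd) and run the status command again; the remaining two members should still have a leader.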

Generate the Kubernetes component certificates

On all nodes (masters and workers), create the Kubernetes certificate directory

mkdir -pv /gshcmy/certs/kubernetes/

On master141, generate the self-built Kubernetes CA certificate

1. Create the CSR (certificate signing request) file, which sets the common name, organization and organizational unit

[root@master141 pki]# pwd

/gshcmy/certs/pki

[root@master141 pki]# cat > k8s-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

[root@master141 pki]# cfssl gencert -initca k8s-ca-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/k8s-ca

2025/12/23 21:10:47 [INFO] generating a new CA key and certificate from CSR

2025/12/23 21:10:47 [INFO] generate received request

2025/12/23 21:10:47 [INFO] received CSR

2025/12/23 21:10:47 [INFO] generating key: rsa-2048

2025/12/23 21:10:47 [INFO] encoded CSR

2025/12/23 21:10:47 [INFO] signed certificate with serial number 172725731318857238528414959316294645059717357847

[root@master141 pki]# ll /gshcmy/certs/kubernetes/

total 20

drwxr-xr-x 2 root root 4096 Dec 23 21:10 ./

drwxr-xr-x 5 root root 4096 Dec 23 20:56 ../

-rw-r--r-- 1 root root 1070 Dec 23 21:10 k8s-ca.csr

-rw------- 1 root root 1679 Dec 23 21:10 k8s-ca-key.pem

-rw-r--r-- 1 root root 1363 Dec 23 21:10 k8s-ca.pem

On master141, issue the apiserver certificates from the self-built CA

Create the signing configuration; certificates issued with it are valid for 876000h (100 years)

[root@master141 pki]# cat > k8s-ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

Create the apiserver CSR file, which sets the common name, organization and organizational unit

[root@master141 pki]# cat > apiserver-csr.json <<EOF

{

"CN": "kube-apiserver",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

]

}

EOF

Issue the apiserver certificate from the self-built CA

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/kubernetes/k8s-ca.pem \

-ca-key=/gshcmy/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

--hostname=10.200.0.1,10.0.0.140,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.gshcmy,kubernetes.default.svc.gshcmy.com,master141,master142,master143,worker144,worker145,10.0.0.141,10.0.0.142,10.0.0.143,10.0.0.144,10.0.0.145 \

--profile=kubernetes \

apiserver-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/apiserver

[root@master141 pki]# ll /gshcmy/certs/kubernetes/apiserver*

-rw-r--r-- 1 root root 1301 Dec 23 21:30 /gshcmy/certs/kubernetes/apiserver.csr

-rw------- 1 root root 1675 Dec 23 21:30 /gshcmy/certs/kubernetes/apiserver-key.pem

-rw-r--r-- 1 root root 1696 Dec 23 21:30 /gshcmy/certs/kubernetes/apiserver.pem
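The first SAN, 10.200.0.1, has to be the first usable address of the Service CIDR, because that is the ClusterIP the kubernetes Service receives. A sketch that computes it from a CIDR with plain bash arithmetic; the /16 size in the example is an assumption, so pass whatever range kube-apiserver will use for --service-cluster-ip-range. first_svc_ip is a hypothetical name:

```shell
#!/bin/bash
# Print the network address of an IPv4 CIDR plus one.
first_svc_ip() {
  local cidr=$1 ip prefix a b c d n mask
  ip=${cidr%/*}
  prefix=${cidr#*/}
  IFS=. read -r a b c d <<< "$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + 1 ))   # network address + 1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

For example, `first_svc_ip 10.200.0.0/16` gives 10.200.0.1, the value used in --hostname above.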

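"10.200.0.1" is the first address of the Service CIDR; adjust it to your own setup.

"10.0.0.140" is the VIP of the load balancer.

"kubernetes, ..., kubernetes.default.svc.gshcmy.com" are the DNS names (A records) under which the apiserver is reached.

"master141, ..., worker145" and "10.0.0.141, ..., 10.0.0.145" are the hostnames and addresses of the cluster nodes.

Generate the aggregation-layer (front-proxy) certificates that third-party components use to communicate with the apiserver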

Create the CSR file for the front-proxy CA

[root@master141 pki]# cat > front-proxy-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

Generate the front-proxy CA certificate

[root@master141 pki]# cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/front-proxy-ca

[root@master141 pki]# ll /gshcmy/certs/kubernetes/front-proxy-ca*

-rw-r--r-- 1 root root 891 Dec 23 21:37 /gshcmy/certs/kubernetes/front-proxy-ca.csr

-rw------- 1 root root 1675 Dec 23 21:37 /gshcmy/certs/kubernetes/front-proxy-ca-key.pem

-rw-r--r-- 1 root root 1094 Dec 23 21:37 /gshcmy/certs/kubernetes/front-proxy-ca.pem

Create the CSR file for the front-proxy client certificate

[root@master141 pki]# cat > front-proxy-client-csr.json <<EOF

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

Issue the front-proxy client certificate from the front-proxy CA

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/kubernetes/front-proxy-ca.pem \

-ca-key=/gshcmy/certs/kubernetes/front-proxy-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

front-proxy-client-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/front-proxy-client

[root@master141 pki]# ll /gshcmy/certs/kubernetes/front-proxy-client*

-rw-r--r-- 1 root root 903 Dec 23 22:08 /gshcmy/certs/kubernetes/front-proxy-client.csr

-rw------- 1 root root 1679 Dec 23 22:08 /gshcmy/certs/kubernetes/front-proxy-client-key.pem

-rw-r--r-- 1 root root 1188 Dec 23 22:08 /gshcmy/certs/kubernetes/front-proxy-client.pem

Generate the controller-manager certificate and kubeconfig file

Create the controller-manager CSR file

[root@master141 pki]# cat > controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes-manual"

}

]

}

EOF

Issue the controller-manager certificate

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/kubernetes/k8s-ca.pem \

-ca-key=/gshcmy/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

controller-manager-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/controller-manager

[root@master141 pki]# ll /gshcmy/certs/kubernetes/controller-manager*

-rw-r--r-- 1 root root 1082 Dec 23 22:20 /gshcmy/certs/kubernetes/controller-manager.csr

-rw------- 1 root root 1675 Dec 23 22:20 /gshcmy/certs/kubernetes/controller-manager-key.pem

-rw-r--r-- 1 root root 1501 Dec 23 22:20 /gshcmy/certs/kubernetes/controller-manager.pem

Create a kubeconfig directory

[root@master141 pki]# mkdir -pv /gshcmy/certs/kubeconfig

Define the cluster

[root@master141 pki]# kubectl config set-cluster gshcmy-k8s \

--certificate-authority=/gshcmy/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.140:8443 \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-controller-manager.kubeconfig

Define the user (credentials)

[root@master141 pki]# kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/gshcmy/certs/kubernetes/controller-manager.pem \

--client-key=/gshcmy/certs/kubernetes/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-controller-manager.kubeconfig

Define a context

[root@master141 pki]# kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=gshcmy-k8s \

--user=system:kube-controller-manager \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-controller-manager.kubeconfig

Make it the current (default) context

[root@master141 pki]# kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-controller-manager.kubeconfig

Generate the scheduler certificate and kubeconfig file

Create the scheduler CSR file

[root@master141 pki]# cat > scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes-manual"

}

]

}

EOF

Issue the scheduler certificate

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/kubernetes/k8s-ca.pem \

-ca-key=/gshcmy/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/scheduler

[root@master141 pki]# ll /gshcmy/certs/kubernetes/scheduler*

-rw-r--r-- 1 root root 1058 Dec 23 22:33 /gshcmy/certs/kubernetes/scheduler.csr

-rw------- 1 root root 1675 Dec 23 22:33 /gshcmy/certs/kubernetes/scheduler-key.pem

-rw-r--r-- 1 root root 1476 Dec 23 22:33 /gshcmy/certs/kubernetes/scheduler.pem

Define the cluster

[root@master141 pki]# kubectl config set-cluster gshcmy-k8s \

--certificate-authority=/gshcmy/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.140:8443 \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig

Define the user (credentials)

[root@master141 pki]# kubectl config set-credentials system:kube-scheduler \

--client-certificate=/gshcmy/certs/kubernetes/scheduler.pem \

--client-key=/gshcmy/certs/kubernetes/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig

Define a context

[root@master141 pki]# kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=gshcmy-k8s \

--user=system:kube-scheduler \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig

Make it the current (default) context

[root@master141 pki]# kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig

Configure the cluster administrator certificate and kubeconfig file

Create the administrator CSR file

[root@master141 pki]# cat > admin-csr.json <<EOF

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes-manual"

}

]

}

EOF

Issue the cluster administrator certificate

[root@master141 pki]# cfssl gencert \

-ca=/gshcmy/certs/kubernetes/k8s-ca.pem \

-ca-key=/gshcmy/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/admin

[root@master141 pki]# ll /gshcmy/certs/kubernetes/admin*

-rw-r--r-- 1 root root 1025 Dec 23 22:47 /gshcmy/certs/kubernetes/admin.csr

-rw------- 1 root root 1675 Dec 23 22:47 /gshcmy/certs/kubernetes/admin-key.pem

-rw-r--r-- 1 root root 1444 Dec 23 22:47 /gshcmy/certs/kubernetes/admin.pem

Define the cluster

[root@master141 pki]# kubectl config set-cluster gshcmy-k8s \

--certificate-authority=/gshcmy/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.140:8443 \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-admin.kubeconfig

Define the user (credentials)

[root@master141 pki]# kubectl config set-credentials kube-admin \

--client-certificate=/gshcmy/certs/kubernetes/admin.pem \

--client-key=/gshcmy/certs/kubernetes/admin-key.pem \

--embed-certs=true \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-admin.kubeconfig

Define a context

[root@master141 pki]# kubectl config set-context kube-admin@kubernetes \

--cluster=gshcmy-k8s \

--user=kube-admin \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-admin.kubeconfig

Make it the current (default) context

[root@master141 pki]# kubectl config use-context kube-admin@kubernetes \

--kubeconfig=/gshcmy/certs/kubeconfig/kube-admin.kubeconfig
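The same four kubectl config calls are repeated for the controller-manager, the scheduler and the administrator. A hypothetical helper, make_kubeconfig, that wraps them with this document's cluster name, CA and server as defaults; setting KUBECTL=echo previews the commands instead of running them:

```shell
#!/bin/bash
# Build a kubeconfig for one user: set-cluster, set-credentials,
# set-context and use-context, stopping at the first failure.
make_kubeconfig() {
  local user=$1 cert=$2 key=$3 out=$4
  local kubectl=${KUBECTL:-kubectl}
  local ca=${K8S_CA:-/gshcmy/certs/kubernetes/k8s-ca.pem}
  local server=${K8S_SERVER:-https://10.0.0.140:8443}
  local cluster=gshcmy-k8s context="${user}@kubernetes"
  $kubectl config set-cluster "$cluster" --certificate-authority="$ca" \
    --embed-certs=true --server="$server" --kubeconfig="$out" &&
  $kubectl config set-credentials "$user" --client-certificate="$cert" \
    --client-key="$key" --embed-certs=true --kubeconfig="$out" &&
  $kubectl config set-context "$context" --cluster="$cluster" \
    --user="$user" --kubeconfig="$out" &&
  $kubectl config use-context "$context" --kubeconfig="$out"
}
```

For example, the scheduler kubeconfig above corresponds to `make_kubeconfig system:kube-scheduler /gshcmy/certs/kubernetes/scheduler.pem /gshcmy/certs/kubernetes/scheduler-key.pem /gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig`.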

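Create the ServiceAccount signing key pair

A ServiceAccount is one of Kubernetes' authentication mechanisms. The key pair created here signs and verifies ServiceAccount tokens: sa.key is the private signing key and sa.pub the matching public key. Since Kubernetes 1.24, creating a ServiceAccount no longer automatically creates a token Secret; tokens are normally issued on demand through the TokenRequest API.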

[root@master141 pki]# openssl genrsa -out /gshcmy/certs/kubernetes/sa.key 2048

Derive sa.pub from sa.key

[root@master141 pki]# openssl rsa -in /gshcmy/certs/kubernetes/sa.key -pubout -out /gshcmy/certs/kubernetes/sa.pub
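A mismatched sa.key/sa.pub pair makes tokens signed with one key fail verification with the other. A sketch that re-derives the public key from the private key and compares it with the file on disk; sa_pair_matches is a hypothetical name:

```shell
#!/bin/bash
# Succeed if the public key file was derived from the given RSA private key.
sa_pair_matches() {
  local key=$1 pub=$2
  # openssl rsa -pubout writes the PEM public key to stdout when -out is omitted
  cmp -s <(openssl rsa -in "$key" -pubout 2>/dev/null) "$pub"
}
```

Usage: `sa_pair_matches /gshcmy/certs/kubernetes/sa.key /gshcmy/certs/kubernetes/sa.pub && echo match`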

[root@master141 pki]# ll /gshcmy/certs/kubernetes/sa*

-rw------- 1 root root 1704 Dec 23 22:53 /gshcmy/certs/kubernetes/sa.key

-rw-r--r-- 1 root root 451 Dec 23 22:54 /gshcmy/certs/kubernetes/sa.pub

On master141, copy the Kubernetes component certificates and kubeconfig files to the other two master nodes

[root@master141 pki]# data_rsync.sh /gshcmy/certs/kubernetes m

===== rsyncing master142: kubernetes =====

root@master142's password:

命令执行成功!

===== rsyncing master143: kubernetes =====

root@master143's password:

命令执行成功!

[root@master141 pki]# data_rsync.sh /gshcmy/certs/kubeconfig m

===== rsyncing master142: kubeconfig =====

root@master142's password:

命令执行成功!

===== rsyncing master143: kubeconfig =====

root@master143's password:

命令执行成功!
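The next step compares file counts on the other masters. That catches missing files but not truncated or stale ones. A stricter check compares SHA-256 checksums using only coreutils. It is sketched below with make_manifest and check_manifest, which are hypothetical helper names:

```shell
#!/usr/bin/env bash
# Sketch: build a SHA-256 manifest of a directory on the source node and verify it elsewhere.
# make_manifest / check_manifest are hypothetical helper names.
make_manifest() {
  # Print "<sha256>  ./<relative path>" for every regular file under $1, in sorted order.
  (cd "$1" && find . -type f -print0 | sort -z | xargs -0 -r sha256sum)
}

check_manifest() {
  # Verify the files under $1 against a manifest read from stdin; non-zero exit on any mismatch.
  (cd "$1" && sha256sum --quiet -c -)
}

# Possible usage on master141 (relies on the passwordless SSH configured earlier and bash on the target):
# make_manifest /gshcmy/certs/kubernetes | ssh master142 "$(declare -f check_manifest); check_manifest /gshcmy/certs/kubernetes"
```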

On the other two masters, verify that the number of files is correct

[root@master142 ~]# ls /gshcmy/certs/kubernetes | wc -l

23

[root@master142 ~]# ls /gshcmy/certs/kubeconfig | wc -l

3

[root@master143 ~]# ls /gshcmy/certs/kubernetes | wc -l

23

[root@master143 ~]# ls /gshcmy/certs/kubeconfig | wc -l

3

Install the high-availability components haproxy + keepalived

Install the high-availability components on all master nodes (master141-master143)

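Tips:

- The high-availability components could also run on two separate virtual machines; to save two machines, I reuse the master nodes here.

- On a public cloud you do not need to install these components, because most public clouds do not support keepalived. Use the provider's load balancer instead, such as Alibaba Cloud "SLB" or Tencent Cloud "ELB".

- ELB is recommended. SLB has a loopback problem: a backend server behind SLB cannot reach the service through the SLB address itself. Tencent Cloud has fixed this problem.

On all masters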

apt-get -y install keepalived haproxy

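Configure haproxy on all master nodes

Tips:

- The haproxy listen port is set to 8443 here, and you can change it. haproxy reverse-proxies to the kube-apiserver on each master node (port 6443).

- If you do change it, also change the server address in the kubeconfig files generated in the certificate steps above. Otherwise connections to the cluster will fail.

Steps:

2.1 Back up the configuration file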

cp /etc/haproxy/haproxy.cfg{,`date +%F`}

2.2 The configuration file is identical on all master nodes

cat > /etc/haproxy/haproxy.cfg << 'EOF'

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-haproxy

bind *:9999

mode http

option httplog

monitor-uri /ruok

frontend gshcmy-k8s

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend gshcmy-k8s

backend gshcmy-k8s

mode tcp

option tcplog

option tcp-check

balance roundrobin

server master141 10.0.0.141:6443 check inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server master142 10.0.0.142:6443 check inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server master143 10.0.0.143:6443 check inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

EOF

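Configure keepalived on all master nodes

Tips:

- "interface" must be the name of your physical NIC. If your NIC is ens33, change "eth0" to "ens33".

- "mcast_src_ip" differs on each master node; set it to match your environment.

- "virtual_ipaddress" is the load balancer's VIP. It must match the apiserver address in the kubeconfig files.

- The script in the "script" field checks whether haproxy is listening on port 8443. If it is not, the script stops keepalived on that node so the VIP moves elsewhere.

- "router_id" is the node IP; each master uses its own IP.

- keepalived only honours "nopreempt" when the initial "state" is BACKUP. With "state MASTER", as in the files below, keepalived logs a warning and ignores nopreempt.

Create the configuration file on master141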

[root@master141 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.141 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:feda:2a78 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:da:2a:78 txqueuelen 1000 (Ethernet)

RX packets 87124 bytes 14793699 (14.7 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 87498 bytes 10987285 (10.9 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@master141 ~]# cat > /etc/keepalived/keepalived.conf <<'EOF'

! Configuration File for keepalived

global_defs {

router_id 10.0.0.141

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.141

nopreempt

authentication {

auth_type PASS

auth_pass gshcmy_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.140

}

}

EOF

Create the configuration file on master142

[root@master142 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.142 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:feb2:8da0 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:b2:8d:a0 txqueuelen 1000 (Ethernet)

RX packets 67794 bytes 12957944 (12.9 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 67085 bytes 9454780 (9.4 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@master142 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.142

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.142

nopreempt

authentication {

auth_type PASS

auth_pass gshcmy_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.140

}

}

EOF

Create the configuration file on master143

[root@master143 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.143 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe55:5971 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:55:59:71 txqueuelen 1000 (Ethernet)

RX packets 76007 bytes 14125808 (14.1 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 75099 bytes 10609607 (10.6 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@master143 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.143

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.143

nopreempt

authentication {

auth_type PASS

auth_pass gshcmy_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.140

}

}

EOF

Create the health-check script on all keepalived nodes (141-143)

cat > /etc/keepalived/check_port.sh <<'EOF'

#!/bin/bash

CHK_PORT=$1

if [ -n "$CHK_PORT" ];then

PORT_PROCESS=$(ss -lnt | awk -v p=":${CHK_PORT}\$" '$4 ~ p' | wc -l)

if [ $PORT_PROCESS -eq 0 ];then

echo "Port $CHK_PORT Is Not Used,End."

systemctl stop keepalived

fi

else

echo "Check Port Cant Be Empty!"

fi

EOF

Make the script executable (141-143)

chmod +x /etc/keepalived/check_port.sh

Start the keepalived service and verify it

Start the keepalived service

systemctl daemon-reload

systemctl enable --now keepalived

systemctl status keepalived

Verify that the service works

On all masters (141-143), disable IPv6:

tee -a /etc/sysctl.conf << EOF

net.ipv6.conf.eth0.disable_ipv6 = 1

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

EOF

sysctl -p

[root@master141 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:da:2a:78 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.141/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.0.0.140/32 scope global eth0

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

[root@master141 ~]# ping 10.0.0.140

PING 10.0.0.140 (10.0.0.140) 56(84) bytes of data.

64 bytes from 10.0.0.140: icmp_seq=1 ttl=64 time=0.016 ms

64 bytes from 10.0.0.140: icmp_seq=2 ttl=64 time=0.029 ms

Stop keepalived on one node (master141 here); the VIP should remain reachable

[root@master141 ~]# systemctl stop keepalived

[root@master141 ~]# ping 10.0.0.140

PING 10.0.0.140 (10.0.0.140) 56(84) bytes of data.

64 bytes from 10.0.0.140: icmp_seq=1 ttl=64 time=0.697 ms

64 bytes from 10.0.0.140: icmp_seq=2 ttl=64 time=0.529 ms

64 bytes from 10.0.0.140: icmp_seq=3 ttl=64 time=0.504 ms

64 bytes from 10.0.0.140: icmp_seq=4 ttl=64 time=0.338 ms

[root@master141 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:da:2a:78 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.141/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

Check whether the VIP has failed over to another node. As expected, it has moved to another master (master143 here).

[root@master143 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:55:59:71 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.143/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.0.0.140/32 scope global eth0

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0
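Instead of reading `ip a` on every node, a small helper can report whether a node holds the VIP. This is a sketch: holds_vip is a hypothetical name, and it only parses the output of `ip -4 addr`:

```shell
#!/usr/bin/env bash
# Sketch: exit 0 if the `ip -4 addr` output on stdin contains the given VIP.
# holds_vip is a hypothetical helper; 10.0.0.140 is the VIP used in this guide.
holds_vip() {
  local vip="$1"
  # Compare the address part of "inet <addr>/<prefix>" exactly, so 10.0.0.14/24 does not count.
  awk -v vip="$vip" '$1 == "inet" { split($2, a, "/"); if (a[1] == vip) found = 1 }
                     END { exit !found }'
}

# Possible usage from master141 (passwordless SSH was configured earlier):
# for h in master141 master142 master143; do
#   ssh "$h" ip -4 addr show dev eth0 | holds_vip 10.0.0.140 && echo "VIP is on $h"
# done
```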

Start and verify the haproxy service

Start haproxy on all master nodes

systemctl enable --now haproxy

systemctl restart haproxy

systemctl status haproxy

Start keepalived again on all master nodes (it was stopped on master141 during the failover test)

systemctl start keepalived

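Use telnet to verify that haproxy works

[root@master142 ~]# telnet 10.0.0.140 8443

Because keepalived was stopped on master141 earlier, first run the following commands on master141. They delete its stale ARP entry for the VIP.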

[root@master141 ~]# ip neigh del 10.0.0.140 dev eth0

[root@master141 ~]# arp -an | grep "10.0.0.140"

[root@master141 ~]# arping -c 2 -I eth0 10.0.0.140

Command 'arping' not found, but can be installed with:

apt install iputils-arping # version 3:20211215-1ubuntu0.1, or

apt install arping # version 2.22-1

[root@master141 ~]# arp -an | grep "10.0.0.140"

[root@master141 ~]# telnet 10.0.0.140 8443

Trying 10.0.0.140...

Connected to 10.0.0.140.

Escape character is '^]'.

Connection closed by foreign host.

Verify through haproxy's HTTP monitor endpoint (/ruok on port 9999)

[root@master141 ~]# curl http://10.0.0.140:9999/ruok

<html><body><h1>200 OK</h1>

Service ready.

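</body></html>

Deploy the ApiServer component

- "--advertise-address" is the IP address of the corresponding master node;

- "--service-cluster-ip-range" is the Service (svc) CIDR;

- "--service-node-port-range" is the NodePort range for Services;

- "--etcd-servers" lists the etcd cluster endpoints.

Flag reference: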

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-apiserver/
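The NodePort range used below, 3000-50000, is much wider than the Kubernetes default of 30000-32767. It also covers ports that daemons in this guide listen on. If kube-apiserver later allocates one of those ports as a NodePort, kube-proxy may capture traffic meant for that daemon, so it can be worth checking the overlap. A minimal sketch, using a hypothetical helper ports_in_range and a port list taken from this guide:

```shell
#!/usr/bin/env bash
# Sketch: print the given ports that fall inside a NodePort range [lo, hi].
# ports_in_range is a hypothetical helper; the ports below come from this guide:
# 2379 etcd, 6443 kube-apiserver, 8443 haproxy frontend, 9999 haproxy monitor, 10250 kubelet.
ports_in_range() {
  local lo="$1" hi="$2"
  shift 2
  local p hits=()
  for p in "$@"; do
    if (( p >= lo && p <= hi )); then
      hits+=("$p")
    fi
  done
  echo "${hits[*]}"
}

ports_in_range 3000 50000 2379 6443 8443 9999 10250    # prints: 6443 8443 9999 10250
ports_in_range 30000 32767 2379 6443 8443 9999 10250   # default range: prints an empty line
```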

master141 node

Create the kube-apiserver unit file on master141

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'
[Unit]
Description=gshcmy's Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
--v=2 \
--bind-address=0.0.0.0 \
--secure-port=6443 \
--allow-privileged=true \
--advertise-address=10.0.0.141 \
--service-cluster-ip-range=10.200.0.0/16 \
--service-node-port-range=3000-50000 \
--etcd-servers=https://10.0.0.141:2379,https://10.0.0.142:2379,https://10.0.0.143:2379 \
--etcd-cafile=/gshcmy/certs/etcd/etcd-ca.pem \
--etcd-certfile=/gshcmy/certs/etcd/etcd-server.pem \
--etcd-keyfile=/gshcmy/certs/etcd/etcd-server-key.pem \
--client-ca-file=/gshcmy/certs/kubernetes/k8s-ca.pem \
--tls-cert-file=/gshcmy/certs/kubernetes/apiserver.pem \
--tls-private-key-file=/gshcmy/certs/kubernetes/apiserver-key.pem \
--kubelet-client-certificate=/gshcmy/certs/kubernetes/apiserver.pem \
--kubelet-client-key=/gshcmy/certs/kubernetes/apiserver-key.pem \
--service-account-key-file=/gshcmy/certs/kubernetes/sa.pub \
--service-account-signing-key-file=/gshcmy/certs/kubernetes/sa.key \
--service-account-issuer=https://kubernetes.default.svc.gshcmy.com \
--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
--authorization-mode=Node,RBAC \
--enable-bootstrap-token-auth=true \
--requestheader-client-ca-file=/gshcmy/certs/kubernetes/front-proxy-ca.pem \
--proxy-client-cert-file=/gshcmy/certs/kubernetes/front-proxy-client.pem \
--proxy-client-key-file=/gshcmy/certs/kubernetes/front-proxy-client-key.pem \
--requestheader-allowed-names=aggregator \
--requestheader-group-headers=X-Remote-Group \
--requestheader-extra-headers-prefix=X-Remote-Extra- \
--requestheader-username-headers=X-Remote-User
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF
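The unit files for master142 and master143 below repeat this one and mainly change --advertise-address. As a sketch, assuming master141's file is the reference, you could generate them with sed instead of retyping them. render_apiserver_unit is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Sketch: print a copy of a kube-apiserver unit file with only --advertise-address replaced.
# render_apiserver_unit is a hypothetical helper name.
render_apiserver_unit() {
  local template="$1" node_ip="$2"
  # Rewrite the value after "--advertise-address=" and leave every other line untouched.
  sed -E "s#(--advertise-address=)[0-9.]+#\1${node_ip}#" "$template"
}

# Possible usage on master141 (review the diff before copying the result anywhere):
# render_apiserver_unit /usr/lib/systemd/system/kube-apiserver.service 10.0.0.142 > /tmp/kube-apiserver-142.service
# diff /usr/lib/systemd/system/kube-apiserver.service /tmp/kube-apiserver-142.service
```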

Start the service

[root@master141 ~]# systemctl daemon-reload && systemctl enable --now kube-apiserver

[root@master141 ~]# systemctl status kube-apiserver

[root@master141 ~]# ss -ntl | grep 6443

LISTEN 0 16384 *:6443 *:*

master142 node

Create the kube-apiserver unit file on master142 (identical to master141's except for --advertise-address)

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'
[Unit]
Description=gshcmy's Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
--v=2 \
--bind-address=0.0.0.0 \
--secure-port=6443 \
--allow-privileged=true \
--advertise-address=10.0.0.142 \
--service-cluster-ip-range=10.200.0.0/16 \
--service-node-port-range=3000-50000 \
--etcd-servers=https://10.0.0.141:2379,https://10.0.0.142:2379,https://10.0.0.143:2379 \
--etcd-cafile=/gshcmy/certs/etcd/etcd-ca.pem \
--etcd-certfile=/gshcmy/certs/etcd/etcd-server.pem \
--etcd-keyfile=/gshcmy/certs/etcd/etcd-server-key.pem \
--client-ca-file=/gshcmy/certs/kubernetes/k8s-ca.pem \
--tls-cert-file=/gshcmy/certs/kubernetes/apiserver.pem \
--tls-private-key-file=/gshcmy/certs/kubernetes/apiserver-key.pem \
--kubelet-client-certificate=/gshcmy/certs/kubernetes/apiserver.pem \
--kubelet-client-key=/gshcmy/certs/kubernetes/apiserver-key.pem \
--service-account-key-file=/gshcmy/certs/kubernetes/sa.pub \
--service-account-signing-key-file=/gshcmy/certs/kubernetes/sa.key \
--service-account-issuer=https://kubernetes.default.svc.gshcmy.com \
--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
--authorization-mode=Node,RBAC \
--enable-bootstrap-token-auth=true \
--requestheader-client-ca-file=/gshcmy/certs/kubernetes/front-proxy-ca.pem \
--proxy-client-cert-file=/gshcmy/certs/kubernetes/front-proxy-client.pem \
--proxy-client-key-file=/gshcmy/certs/kubernetes/front-proxy-client-key.pem \
--requestheader-allowed-names=aggregator \
--requestheader-group-headers=X-Remote-Group \
--requestheader-extra-headers-prefix=X-Remote-Extra- \
--requestheader-username-headers=X-Remote-User
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

Start the service


[root@master142 ~]# systemctl daemon-reload && systemctl enable --now kube-apiserver

[root@master142 ~]# systemctl status kube-apiserver

[root@master142 ~]# ss -ntl | grep 6443

LISTEN 0 16384 *:6443 *:*

master143 node

Create the kube-apiserver unit file on master143 (identical to master141's except for --advertise-address)

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'
[Unit]
Description=gshcmy's Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
--v=2 \
--bind-address=0.0.0.0 \
--secure-port=6443 \
--allow-privileged=true \
--advertise-address=10.0.0.143 \
--service-cluster-ip-range=10.200.0.0/16 \
--service-node-port-range=3000-50000 \
--etcd-servers=https://10.0.0.141:2379,https://10.0.0.142:2379,https://10.0.0.143:2379 \
--etcd-cafile=/gshcmy/certs/etcd/etcd-ca.pem \
--etcd-certfile=/gshcmy/certs/etcd/etcd-server.pem \
--etcd-keyfile=/gshcmy/certs/etcd/etcd-server-key.pem \
--client-ca-file=/gshcmy/certs/kubernetes/k8s-ca.pem \
--tls-cert-file=/gshcmy/certs/kubernetes/apiserver.pem \
--tls-private-key-file=/gshcmy/certs/kubernetes/apiserver-key.pem \
--kubelet-client-certificate=/gshcmy/certs/kubernetes/apiserver.pem \
--kubelet-client-key=/gshcmy/certs/kubernetes/apiserver-key.pem \
--service-account-key-file=/gshcmy/certs/kubernetes/sa.pub \
--service-account-signing-key-file=/gshcmy/certs/kubernetes/sa.key \
--service-account-issuer=https://kubernetes.default.svc.gshcmy.com \
--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
--authorization-mode=Node,RBAC \
--enable-bootstrap-token-auth=true \
--requestheader-client-ca-file=/gshcmy/certs/kubernetes/front-proxy-ca.pem \
--proxy-client-cert-file=/gshcmy/certs/kubernetes/front-proxy-client.pem \
--proxy-client-key-file=/gshcmy/certs/kubernetes/front-proxy-client-key.pem \
--requestheader-allowed-names=aggregator \
--requestheader-group-headers=X-Remote-Group \
--requestheader-extra-headers-prefix=X-Remote-Extra- \
--requestheader-username-headers=X-Remote-User
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

Start the service

[root@master143 ~]# systemctl daemon-reload && systemctl enable --now kube-apiserver

[root@master143 ~]# systemctl status kube-apiserver

[root@master143 ~]# ss -ntl | grep 6443

LISTEN 0 16384 *:6443 *:*

Deploy the ControllerManager component

Create the configuration file on all master nodes

Tips:

- "--cluster-cidr" is the Pod CIDR; you can change it as needed.

Flag reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-controller-manager/

The kube-controller-manager unit file is identical on all master nodes (provided the certificate files are stored at the same paths!):

cat > /usr/lib/systemd/system/kube-controller-manager.service << 'EOF'
[Unit]
Description=gshcmy's Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-controller-manager \
--v=2 \
--root-ca-file=/gshcmy/certs/kubernetes/k8s-ca.pem \
--cluster-signing-cert-file=/gshcmy/certs/kubernetes/k8s-ca.pem \
--cluster-signing-key-file=/gshcmy/certs/kubernetes/k8s-ca-key.pem \
--service-account-private-key-file=/gshcmy/certs/kubernetes/sa.key \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-controller-manager.kubeconfig \
--leader-elect=true \
--use-service-account-credentials=true \
--node-monitor-grace-period=40s \
--node-monitor-period=5s \
--controllers=*,bootstrapsigner,tokencleaner \
--allocate-node-cidrs=true \
--cluster-cidr=10.100.0.0/16 \
--requestheader-client-ca-file=/gshcmy/certs/kubernetes/front-proxy-ca.pem \
--node-cidr-mask-size=24
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF
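With --cluster-cidr=10.100.0.0/16 and --node-cidr-mask-size=24, the controller-manager can hand out 2^(24-16) = 256 per-node /24 ranges of 256 addresses each (a few of them are not usable as Pod IPs). This bounds the node count for CNIs that use these per-node ranges; Calico's own IPAM may allocate differently. A quick arithmetic sketch, where cidr_capacity is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Sketch: number of per-node subnets and addresses per subnet for a cluster CIDR prefix
# split into node subnets of a given prefix. cidr_capacity is a hypothetical helper.
cidr_capacity() {
  local cluster_prefix="$1" node_prefix="$2"
  local nodes=$(( 1 << (node_prefix - cluster_prefix) ))   # node subnets available
  local per_node=$(( 1 << (32 - node_prefix) ))            # addresses in each node subnet
  echo "$nodes $per_node"
}

cidr_capacity 16 24   # prints: 256 256
```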

Start the controller-manager service

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

ss -ntl | grep 10257

Deploy the Scheduler component

Flag reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-scheduler/

Create the configuration file on all master nodes

The kube-scheduler unit file is identical on all master nodes (provided the certificate files are stored at the same paths):

cat > /usr/lib/systemd/system/kube-scheduler.service <<'EOF'
[Unit]
Description=gshcmy's Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-scheduler \
--v=2 \
--leader-elect=true \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-scheduler.kubeconfig
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

Start the scheduler service

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

ss -ntl | grep 10259

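LISTEN 0 16384 *:10259 *:*

Configure bootstrapping so that kubelet certificates are issued automatically

Create the bootstrap-kubelet.kubeconfig file on master141

Tips:

- "--server" points to the load balancer's IP address; the load balancer reverse-proxies requests to the master nodes.

- "--token" can also be customized, but then the "token-id" and "token-secret" values in the "bootstrap" Secret must be changed to match. A bootstrap token must have the form "<token-id>.<token-secret>": a 6-character token-id and a 16-character token-secret, both using only [a-z0-9]. "gshcmy.gshcmy" as used below has only a 6-character secret, so kube-apiserver will not recognize it as a bootstrap token.

Set the cluster (on master141)

kubectl config set-cluster gshcmy-k8s \
--certificate-authority=/gshcmy/certs/kubernetes/k8s-ca.pem \
--embed-certs=true \
--server=https://10.0.0.140:8443 \
--kubeconfig=/gshcmy/certs/kubeconfig/bootstrap-kubelet.kubeconfig

Create the user

kubectl config set-credentials tls-bootstrap-token-user \
--token=gshcmy.gshcmy \
--kubeconfig=/gshcmy/certs/kubeconfig/bootstrap-kubelet.kubeconfig

Bind the cluster and the user in a context

kubectl config set-context tls-bootstrap-token-user@kubernetes \
--cluster=gshcmy-k8s \
--user=tls-bootstrap-token-user \
--kubeconfig=/gshcmy/certs/kubeconfig/bootstrap-kubelet.kubeconfig

Set the default context

kubectl config use-context tls-bootstrap-token-user@kubernetes \
--kubeconfig=/gshcmy/certs/kubeconfig/bootstrap-kubelet.kubeconfig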
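A kubelet bootstrap token has the form `<token-id>.<token-secret>`, with 6 and 16 characters respectively, drawn only from [a-z0-9]. The sketch below checks a token and generates a random one. is_bootstrap_token and new_bootstrap_token are hypothetical helpers. If you adopt a generated token, use it for --token here, and split it into token-id and token-secret in the bootstrap Secret below:

```shell
#!/usr/bin/env bash
# Sketch: validate and generate bootstrap tokens of the form [a-z0-9]{6}.[a-z0-9]{16}.
# is_bootstrap_token / new_bootstrap_token are hypothetical helper names.
is_bootstrap_token() {
  [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

new_bootstrap_token() {
  local id secret
  # Keep only lowercase letters and digits from the random byte stream.
  id=$(LC_ALL=C tr -dc 'a-z0-9' < /dev/urandom | head -c 6)
  secret=$(LC_ALL=C tr -dc 'a-z0-9' < /dev/urandom | head -c 16)
  echo "${id}.${secret}"
}

is_bootstrap_token "gshcmy.gshcmy" || echo "gshcmy.gshcmy is NOT a valid bootstrap token"
token=$(new_bootstrap_token)
echo "example token: $token (token-id=${token%%.*}, token-secret=${token#*.})"
```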

Copy the admin kubeconfig on all master nodes

Every master copies the administrator's kubeconfig file:

[root@master141-143 ~]# mkdir -p /root/.kube

[root@master141-143 ~]# cp /gshcmy/certs/kubeconfig/kube-admin.kubeconfig /root/.kube/config

[root@master141-143 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

etcd-0 Healthy ok

scheduler Healthy ok


Check the cluster state. If the ComponentStatus (cs) API is removed in the future, use the "cluster-info" subcommand instead:

[root@master141 ~]# kubectl cluster-info

Kubernetes control plane is running at https://10.0.0.140:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

Create the bootstrap-secret authorization

Create the bootstrap-secret manifest used for authorization

cat > bootstrap-secret.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: bootstrap-token-gshcmy
  namespace: kube-system
type: bootstrap.kubernetes.io/token
stringData:
  description: "The default bootstrap token generated by 'kubelet '."
  token-id: gshcmy
  token-secret: gshcmy
  usage-bootstrap-authentication: "true"
  usage-bootstrap-signing: "true"
  auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubelet-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node-bootstrapper
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:nodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-certificate-rotation
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:nodes
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
- apiGroups:
  - ""
  resources:
  - nodes/proxy
  - nodes/stats
  - nodes/log
  - nodes/spec
  - nodes/metrics
  verbs:
  - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: kube-apiserver
EOF

Apply the bootstrap-secret manifest

[root@master141 ~]# kubectl apply -f bootstrap-secret.yaml

secret/bootstrap-token-gshcmy created

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-certificate-rotation created

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

Deploying the worker components: starting kubelet

Copy the certificates

Distribute the certificates from master141 to the other nodes

[root@master141 ~]# cd /gshcmy/certs/

for NODE in master142 master143 worker144 worker145; do

echo $NODE

ssh $NODE "mkdir -p /gshcmy/certs/kube{config,rnetes}"

for FILE in k8s-ca.pem k8s-ca-key.pem front-proxy-ca.pem; do

scp kubernetes/$FILE $NODE:/gshcmy/certs/kubernetes/${FILE}

done

scp kubeconfig/bootstrap-kubelet.kubeconfig $NODE:/gshcmy/certs/kubeconfig/

done

Verify on a worker node

[root@worker145 certs]# ll /gshcmy/ -R

/gshcmy/:

total 12

drwxr-xr-x 3 root root 4096 Dec 23 20:56 ./

drwxr-xr-x 21 root root 4096 Dec 23 20:56 ../

drwxr-xr-x 4 root root 4096 Dec 24 21:38 certs/

/gshcmy/certs:

total 16

drwxr-xr-x 4 root root 4096 Dec 24 21:38 ./

drwxr-xr-x 3 root root 4096 Dec 23 20:56 ../

drwxr-xr-x 2 root root 4096 Dec 24 21:40 kubeconfig/

drwxr-xr-x 2 root root 4096 Dec 24 21:40 kubernetes/

/gshcmy/certs/kubeconfig:

total 12

drwxr-xr-x 2 root root 4096 Dec 24 21:40 ./

drwxr-xr-x 4 root root 4096 Dec 24 21:38 ../

-rw------- 1 root root 2223 Dec 24 21:40 bootstrap-kubelet.kubeconfig

/gshcmy/certs/kubernetes:

total 20

drwxr-xr-x 2 root root 4096 Dec 24 21:40 ./

drwxr-xr-x 4 root root 4096 Dec 24 21:38 ../

-rw-r--r-- 1 root root 1094 Dec 24 21:40 front-proxy-ca.pem

-rw------- 1 root root 1679 Dec 24 21:40 k8s-ca-key.pem

-rw-r--r-- 1 root root 1363 Dec 24 21:40 k8s-ca.pem

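Start the kubelet service

- The "kubelet.kubeconfig" file passed to "--kubeconfig" in "10-kubelet.conf" does not exist yet; it is generated automatically later through TLS bootstrapping;

- "clusterDNS" is the cluster DNS address and can be customized, e.g. "10.200.0.154", but it has to lie inside the Service CIDR (10.200.0.0/16);

- "clusterDomain" is the cluster's domain name and must match the cluster design, e.g. "gshcmy.com";

- "ExecStart=" appears twice in "10-kubelet.conf". The first, empty line clears the ExecStart inherited from kubelet.service; without it systemd sees two ExecStart commands and may refuse to start kubelet;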
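The DNS Service is given the clusterDNS address as its ClusterIP. So clusterDNS has to be inside the Service CIDR (--service-cluster-ip-range=10.200.0.0/16 on kube-apiserver), and it must not fall into the Pod CIDR (10.100.0.0/16). A pure-bash sketch for checking such membership, with hypothetical helpers ip_to_int and in_cidr:

```shell
#!/usr/bin/env bash
# Sketch: test whether an IPv4 address lies inside a CIDR block.
# ip_to_int / in_cidr are hypothetical helper names.
ip_to_int() {
  local IFS=.
  local -a o=($1)   # split "a.b.c.d" into four octets
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

in_cidr() {
  local ip="$1" net="${2%/*}" prefix="${2#*/}"
  # Build a 32-bit netmask from the prefix length, then compare the network parts.
  local mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

in_cidr 10.200.0.254 10.200.0.0/16 && echo "clusterDNS is inside the Service CIDR"
in_cidr 10.200.0.254 10.100.0.0/16 || echo "clusterDNS is outside the Pod CIDR"
```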

Create the working directories on all nodes (masters and workers)

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

cat > /etc/kubernetes/kubelet-conf.yml <<'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /gshcmy/certs/kubernetes/k8s-ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 10.200.0.254
clusterDomain: gshcmy.com
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s
EOF

Configure the kubelet service on all nodes

cat > /usr/lib/systemd/system/kubelet.service <<'EOF'

[Unit]

Description=gshcmy's Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF

Create the kubelet service drop-in file on all nodes

cat > /etc/systemd/system/kubelet.service.d/10-kubelet.conf <<'EOF'

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/gshcmy/certs/kubeconfig/bootstrap-kubelet.kubeconfig --kubeconfig=/gshcmy/certs/kubeconfig/kubelet.kubeconfig"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_SYSTEM_ARGS=--container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

EOF

Start kubelet on all nodes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

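Troubleshooting

If the following error appears:

nodes is forbidden: User \"system:anonymous\" cannot create resource \"nodes\" in API group \"\" at the cluster scope" node="k8s-master141"

Workaround (lab use only): the ClusterRoleBinding below grants the cluster-admin role to system:anonymous. That gives full administrator rights to every unauthenticated client. "system:anonymous" in the error means the kubelet's request was not authenticated at all. The more likely proper fix is to make sure the bootstrap token is a valid [a-z0-9]{6}.[a-z0-9]{16} token that matches the token-id and token-secret of the bootstrap Secret. Afterwards, remove this binding with kubectl delete clusterrolebinding gshcmy-kubelet-rbac.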

[root@master143 ~]# cat test-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: gshcmy-kubelet-rbac
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: system:anonymous

[root@master143 ~]# kubectl apply -f test-rbac.yaml

clusterrolebinding.rbac.authorization.k8s.io/gshcmy-kubelet-rbac created

Deploying the worker components: the kube-proxy service

[root@master141 pki]# cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-proxy",

"OU": "Kubernetes-manual"

}

]

}

EOF

Create the certificate files needed by kube-proxy

[root@master141 pki]# cfssl gencert \
-ca=/gshcmy/certs/kubernetes/k8s-ca.pem \
-ca-key=/gshcmy/certs/kubernetes/k8s-ca-key.pem \
-config=k8s-ca-config.json \
-profile=kubernetes \
kube-proxy-csr.json | cfssljson -bare /gshcmy/certs/kubernetes/kube-proxy

[root@master141 pki]# ll /gshcmy/certs/kubernetes/kube-proxy*

-rw-r--r-- 1 root root 1045 Dec 24 22:45 /gshcmy/certs/kubernetes/kube-proxy.csr

-rw------- 1 root root 1679 Dec 24 22:45 /gshcmy/certs/kubernetes/kube-proxy-key.pem

-rw-r--r-- 1 root root 1464 Dec 24 22:45 /gshcmy/certs/kubernetes/kube-proxy.pem

Set the cluster

[root@master141 pki]# kubectl config set-cluster gshcmy-k8s \
--certificate-authority=/gshcmy/certs/kubernetes/k8s-ca.pem \
--embed-certs=true \
--server=https://10.0.0.140:8443 \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-proxy.kubeconfig

Set up a user entry

[root@master141 pki]# kubectl config set-credentials system:kube-proxy \
--client-certificate=/gshcmy/certs/kubernetes/kube-proxy.pem \
--client-key=/gshcmy/certs/kubernetes/kube-proxy-key.pem \
--embed-certs=true \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-proxy.kubeconfig

Set up a context

[root@master141 pki]# kubectl config set-context kube-proxy@kubernetes \
--cluster=gshcmy-k8s \
--user=system:kube-proxy \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-proxy.kubeconfig

Use this context as the default

[root@master141 pki]# kubectl config use-context kube-proxy@kubernetes \
--kubeconfig=/gshcmy/certs/kubeconfig/kube-proxy.kubeconfig

Copy the kube-proxy kubeconfig file to the other nodes

[root@master141 pki]# for NODE in master142 master143 worker144 worker145; do

echo $NODE

scp /gshcmy/certs/kubeconfig/kube-proxy.kubeconfig $NODE:/gshcmy/certs/kubeconfig/

done

Create the kube-proxy.yml configuration file on all nodes

cat > /etc/kubernetes/kube-proxy.yml << EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
bindAddress: 0.0.0.0
metricsBindAddress: 127.0.0.1:10249
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /gshcmy/certs/kubeconfig/kube-proxy.kubeconfig
  qps: 5
clusterCIDR: 10.100.0.0/16
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
EOF
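The conntrack settings above interact. kube-proxy multiplies maxPerCore by the number of CPUs and uses min instead when that product is smaller. For the 2-core nodes in this guide, 32768 × 2 = 65536 < 131072, so min wins. A sketch of that rule, where conntrack_max is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Sketch: effective conntrack limit = max(maxPerCore * CPUs, min).
# conntrack_max is a hypothetical helper name.
conntrack_max() {
  local cpus="$1" per_core="$2" min="$3"
  local scaled=$(( per_core * cpus ))
  if (( scaled > min )); then echo "$scaled"; else echo "$min"; fi
}

conntrack_max 2 32768 131072            # the 2-core nodes in this guide -> 131072
conntrack_max "$(nproc)" 32768 131072   # value for the machine you run this on
```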

Manage kube-proxy with systemd on all nodes

cat > /usr/lib/systemd/system/kube-proxy.service << EOF
[Unit]
Description=gshcmy's Kubernetes Proxy
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-proxy \
--config=/etc/kubernetes/kube-proxy.yml \
--v=2
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

Start kube-proxy on all nodes

systemctl daemon-reload && systemctl enable --now kube-proxy

systemctl status kube-proxy

ss -ntl |grep 10249
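Seeing port 10249 listening confirms that kube-proxy is up, but not which proxy mode it chose. kube-proxy's metrics server (`metricsBindAddress`, 127.0.0.1:10249 here) also serves a `/proxyMode` endpoint that reports the active mode. The sketch below wraps that query in a hypothetical `check_proxy_mode` function.

```shell
# Hypothetical helper: confirm kube-proxy is running in the expected mode.
# Queries the /proxyMode endpoint on kube-proxy's metricsBindAddress.
# Usage: check_proxy_mode [EXPECTED_MODE]   (defaults to ipvs)
check_proxy_mode() {
    want=${1:-ipvs}
    got=$(curl -s --max-time 3 http://127.0.0.1:10249/proxyMode)
    if [ "$got" = "$want" ]; then
        echo "kube-proxy mode: $got"
    else
        echo "expected mode '$want', got '${got:-no response}'"
        return 1
    fi
}
```

In ipvs mode, `ipvsadm -Ln` can also list the virtual servers that kube-proxy programmed for Service ClusterIPs, if the `ipvsadm` tool is installed.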

Example: deploying the Calico network plugin

Reference link:

https://docs.tigera.io/calico/latest/getting-started/kubernetes/quickstart

1. Download the resource manifests

[root@master142 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.0/manifests/tigera-operator.yaml

[root@master142 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.0/manifests/custom-resources.yaml

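Change the Pod CIDR to match your own cluster (10.100.0.0/16 in this guide, the same value as kube-proxy's clusterCIDR)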

[root@master142 ~]# grep cidr custom-resources.yaml

cidr: 192.168.0.0/16

[root@master142 ~]#

[root@master142 ~]# sed -i '/cidr/s#192.168#10.100#' custom-resources.yaml

[root@master142 ~]#

[root@master142 ~]# grep cidr custom-resources.yaml

cidr: 10.100.0.0/16
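A mismatch between the Calico IP pool and kube-proxy's `clusterCIDR` is easy to miss. A quick comparison of the two files can catch it before deploying. This is a minimal sketch that assumes the first `cidr:` line in `custom-resources.yaml` is the one that matters (true for the default single IPv4 pool). The name `cidrs_match` is hypothetical.

```shell
# Hypothetical helper: compare Calico's IP pool CIDR with kube-proxy's clusterCIDR.
# Usage: cidrs_match custom-resources.yaml /etc/kubernetes/kube-proxy.yml
cidrs_match() {
    # First "cidr:" entry in the Calico Installation resource (indented)
    calico=$(sed -n 's/^[[:space:]]*cidr:[[:space:]]*//p' "$1" | head -n 1)
    # Top-level clusterCIDR in the kube-proxy configuration
    proxy=$(sed -n 's/^clusterCIDR:[[:space:]]*//p' "$2" | head -n 1)
    if [ -n "$calico" ] && [ "$calico" = "$proxy" ]; then
        echo "match: $calico"
    else
        echo "mismatch: calico=${calico:-?} kube-proxy=${proxy:-?}"
        return 1
    fi
}
```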

Deploy Calico

[root@master142 ~]# kubectl create -f tigera-operator.yaml

[root@master142 ~]#

[root@master142 ~]# kubectl create -f custom-resources.yaml

Check whether Calico was deployed successfully

[root@master141 pki]# kubectl get pods -A -o wide

Tip:

Image downloads may fail. If that happens, pull the images manually.
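To find out which pods are stuck and which images they need, you can filter the pod list for image-pull errors. This is a minimal sketch with two hypothetical helpers. `image_pull_failures` reads `kubectl get pods -A --no-headers` output on stdin; its STATUS column contains `ErrImagePull` or `ImagePullBackOff`, possibly with an `Init:` prefix. `pod_images` prints the init and regular container images of one pod via a kubectl JSONPath query.

```shell
# Hypothetical helper: list pods stuck on image pulls.
# Reads "kubectl get pods -A --no-headers" on stdin
# (columns: NAMESPACE NAME READY STATUS RESTARTS AGE).
image_pull_failures() {
    awk '$4 ~ /ImagePull/ { print $1 "/" $2 " " $4 }'
}

# Hypothetical helper: print every image (init containers first) used by one pod.
# Usage: pod_images NAMESPACE POD
pod_images() {
    kubectl get pod -n "$1" "$2" \
        -o jsonpath='{range .spec.initContainers[*]}{.image}{"\n"}{end}{range .spec.containers[*]}{.image}{"\n"}{end}'
}
```

For example, run `kubectl get pods -A --no-headers | image_pull_failures`, then `pod_images calico-system <pod>` for each reported pod. Pull the listed images on the node the pod is scheduled to. With containerd and crictl configured, for instance, that is `crictl pull <image>`; the exact command depends on the container runtime set up earlier.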

Uninstall Calico

[root@master142 ~]# kubectl delete -f custom-resources.yaml -f tigera-operator.yaml

Deploy Flannel

Download the resource manifest

[root@master141 ~]# wget https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml

Modify the resource manifest

[root@k8s-master01 ~]# grep 16 kube-flannel.yml

"Network": "10.144.0.0/16",

[root@k8s-master01 ~]# sed -i '/"Network"/s#"10\.[0-9]*\.0\.0/16"#"10.100.0.0/16"#' kube-flannel.yml

[root@k8s-master01 ~]# grep 16 kube-flannel.yml

"Network": "10.100.0.0/16",

[root@k8s-master01 ~]# grep image kube-flannel.yml

image: docker.io/flannel/flannel:v0.25.4

image: docker.io/flannel/flannel-cni-plugin:v1.4.1-flannel1

image: docker.io/flannel/flannel:v0.25.4

[root@k8s-master01 ~]# sed -i 's#docker.io/flannel/flannel:v0.25.4#docker.io/flannel/flannel:v0.25.3#' kube-flannel.yml

[root@k8s-master01 ~]# grep image kube-flannel.yml

image: docker.io/flannel/flannel:v0.25.3

image: docker.io/flannel/flannel-cni-plugin:v1.4.1-flannel1

image: docker.io/flannel/flannel:v0.25.3
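Because image pulls may fail, it can help to pre-pull the manifest's images on every node before applying it. This sketch only prints one `crictl pull` command per unique image and does not run anything. It assumes the nodes use a CRI runtime that `crictl` is configured for. The name `print_pull_cmds` is hypothetical.

```shell
# Hypothetical helper: print one "crictl pull" command per unique image in a manifest.
# Assumes crictl is configured for the node's container runtime.
# Usage: print_pull_cmds kube-flannel.yml
print_pull_cmds() {
    sed -n 's/^[[:space:]]*image:[[:space:]]*//p' "$1" | tr -d '"' | LC_ALL=C sort -u |
        while read -r img; do
            echo "crictl pull $img"
        done
}
```

Review the output, then run it on each node, for example with `print_pull_cmds kube-flannel.yml | sh`.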

Apply the Flannel manifest

kubectl apply -f kube-flannel.yml

Check the Flannel components

kubectl get pods -A -o wide

Check whether the cluster is healthy

kubectl get nodes
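Nodes usually move to Ready only after the CNI plugin is running, so it can take a little while. This is a minimal polling sketch with two hypothetical helpers. `nodes_not_ready` reads `kubectl get nodes --no-headers` on stdin and fails if any node's STATUS is not `Ready`; `Ready,SchedulingDisabled` counts as ready. `wait_nodes_ready` retries every 10 seconds, up to 30 times; those limits are arbitrary.

```shell
# Hypothetical helper: report nodes that are not Ready.
# Reads "kubectl get nodes --no-headers" on stdin (columns: NAME STATUS ROLES AGE VERSION).
# Exit status is 0 only if at least one node is listed and all of them are Ready.
nodes_not_ready() {
    awk '!($2 == "Ready" || $2 ~ /^Ready,/) { print $1 " " $2; bad = 1 }
         END { if (NR == 0 || bad) exit 1 }'
}

# Hypothetical helper: poll until every node is Ready (about 5 minutes at most).
wait_nodes_ready() {
    i=0
    until kubectl get nodes --no-headers | nodes_not_ready >/dev/null; do
        i=$((i + 1))
        [ "$i" -ge 30 ] && { echo "timed out waiting for nodes"; return 1; }
        sleep 10
    done
    echo "all nodes Ready"
}
```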